TESTING AI SOLUTIONS

April 18, 2019

Artificial Intelligence (AI), in one guise or another, is beginning to permeate our everyday activities. Whether it’s a visit to the doctor’s office to get an initial diagnosis of eye problems or a search for a new job, AI is either already making decisions on your behalf or will be in the not-too-distant future.

Consider first the doctor’s office. DeepMind, a UK-based company, has built a prototype that can diagnose complex eye diseases such as glaucoma and diabetic retinopathy in real time. Developed over the last three years with eye specialists at a leading hospital in London, the tool can detect these (and other) eye diseases with the same degree of accuracy as an experienced specialist clinician. Its purpose is to determine whether a patient presenting to their general practitioner (GP) with symptoms should be referred to a specialist. As this is a working prototype, there is still some way to go before a version is ready for regulatory approval. Before then, rigorous testing will be needed to confirm that it operates correctly, first for clinical trials and ultimately for rollout.

The second example is Pitney Bowes, a business services organisation that needed to recruit staff to handle the fulfillment of up to 44,000 parcels per hour. Their answer was to perform an initial screening of potential staff members using a chatbot that, if the preliminary questions were answered satisfactorily, scheduled a face-to-face interview and sent an email confirmation. But there’s a word of caution: Amazon abandoned its AI recruitment tool because it discriminated against women. The tool focused on finding candidates who fitted the profile of the existing workforce, which is predominantly male, rather than on what the company actually wanted.

So, there’s an issue. AI technology can clearly provide benefits to organizations and individuals when deployed correctly, but we need to be certain that the outcomes we’re presented with are accurate, correct and the ones we’re after. Traditional functional testing (and, to some extent, non-functional testing) isn’t the answer. It assumes that the same inputs will always give the same answer, and so doesn’t cope with the non-deterministic nature of AI and machine learning. Because the intelligence is acquired during program execution, the underlying machine model needs to be carefully monitored to ensure that it processes data correctly and as intended, and that it remains stable and robust within given usage limits. This also means that test data goes beyond the traditional input data considerations and must additionally include all other inputs to the system.
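One way to picture the difference is a test that checks a statistical threshold over repeated runs instead of an exact expected value. The sketch below is illustrative only: `noisy_classifier`, the sample data, the run count and the 0.9 accuracy threshold are all assumptions made for the example, not part of any real product or framework.

```python
import random
from statistics import mean

def noisy_classifier(x: float, rng: random.Random) -> int:
    """Hypothetical stand-in for a non-deterministic model.

    Returns 1 when a noisy score exceeds 0.5, so inputs near the
    boundary can receive different labels on different runs.
    """
    return 1 if x + rng.gauss(0, 0.05) > 0.5 else 0

def accuracy(samples, rng):
    """Fraction of samples whose predicted label matches the expected one."""
    return mean(
        1.0 if noisy_classifier(x, rng) == label else 0.0
        for x, label in samples
    )

def passes_statistical_test(samples, runs=30, min_accuracy=0.9, seed=0):
    """Pass when the mean accuracy over repeated runs meets the threshold.

    The seed only makes this sketch repeatable; the threshold is an
    assumed acceptance criterion that a real project would have to agree.
    """
    rng = random.Random(seed)
    scores = [accuracy(samples, rng) for _ in range(runs)]
    return mean(scores) >= min_accuracy, scores

# Assumed test data: inputs from 0.00 to 1.00 with the label we expect.
SAMPLES = [(i / 100, 1 if i / 100 > 0.5 else 0) for i in range(101)]

passed, scores = passes_statistical_test(SAMPLES)
print("passed:", passed)
```

The point of the design is that a single mismatch near the decision boundary does not fail the test; only a sustained drop in accuracy does.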

To achieve a stable, robust system, testing needs to be embedded at every stage of the design and development process. Furthermore, it needs to be iterative and deployed consistently throughout the entire delivery process and, just as importantly, beyond into live operation. This provides continued validation that the system behaves as intended.
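As a rough illustration of validation that continues into live operation, the sketch below keeps a rolling window of recent predictions and flags the system when accuracy in that window drops below a threshold. The class name `AccuracyMonitor`, the window size and the threshold are hypothetical choices made for this example; it also assumes that the true outcome eventually becomes known for each prediction.

```python
from collections import deque

class AccuracyMonitor:
    """Hypothetical rolling-window check on live prediction accuracy."""

    def __init__(self, window=100, min_accuracy=0.9):
        # Only the most recent `window` outcomes are kept.
        self.outcomes = deque(maxlen=window)
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        """Store whether a live prediction matched the confirmed outcome."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Accuracy over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def is_healthy(self):
        """True while windowed accuracy is at or above the threshold."""
        acc = self.accuracy()
        return acc is None or acc >= self.min_accuracy

# Usage with assumed values: a small window so the effect is visible.
monitor = AccuracyMonitor(window=4, min_accuracy=0.75)
for predicted, actual in [(1, 1), (0, 0), (1, 1), (0, 0)]:
    monitor.record(predicted, actual)
print(monitor.is_healthy())  # all four recent predictions were correct
```

In practice the unhealthy state would trigger an alert or a review rather than a print statement; how to respond is a delivery decision, not something the sketch prescribes.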

There’s also the question of confidence in AI solutions. The EU has recognised this complex issue and published its Ethics Guidelines for Trustworthy AI (December 2018), which is currently a working paper. In it, they identify ten requirements for trustworthy AI, ranging from Accountability, Data Governance, and Design for All to Robustness, Safety, and Transparency.

The EU lists its requirements in alphabetical order, as I have done here, to reflect the equal importance of all requirements. The paper further recognises that the list is non-exhaustive, perhaps reflecting the infancy of AI at the moment. There is also specific guidance on testing. The paper distinguishes between technical and non-technical methods, but fundamentally all of them relate to testing. The technical methods are:

- Ethics and Rule of Law by design

- Architectures for Trustworthy AI

- Testing and Validating

- Traceability and Auditability

- Explanation

The non-technical methods are:

- Regulation

- Standardization

- Accountability Governance

- Codes of Conduct

- Education and Awareness to foster an ethical mindset

- Stakeholder and Social Dialogue

- Diversity and Inclusive Design teams

If we look at traditional test strategies, the focus is on testing and validation, traceability & auditability, and standardization. To test AI solutions effectively, our delivery oversight should extend to ensuring that the stakeholder & social dialogue requirements are addressed and, of course, to providing assurance that the architecture supports a trustworthy AI.

Diversity and inclusivity are specifically called out in the Guidelines to minimise the impact of individual bias in the solution being implemented. We saw earlier that Amazon failed to deliver an AI recruitment solution because it was not tested with the right demographic. So now, more than ever before, there is a real need to drive inclusion and diversity throughout the delivery lifecycle, including testing.
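A simple bias check of this kind can be sketched by comparing selection rates across demographic groups in the test results. The example below is a minimal illustration, not a method taken from the Guidelines: the function names and the sample decisions are invented, and the 0.8 threshold is borrowed from the "four-fifths" rule of thumb used in US employment practice as an assumed pass mark.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the fraction of candidates selected within each group.

    `decisions` is an iterable of (group, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}

def disparate_impact_ratio(decisions):
    """Lowest group selection rate divided by the highest.

    A value of 1.0 means every group is selected at the same rate.
    """
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no group is favoured
    return min(rates.values()) / highest

# Invented screening results: 6 of 10 selected in group A, 3 of 10 in group B.
DECISIONS = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

ratio = disparate_impact_ratio(DECISIONS)
THRESHOLD = 0.8  # assumed pass mark for this sketch
print("ratio:", ratio, "passes:", ratio >= THRESHOLD)
```

A check like this only works if the test data covers each demographic group, which is exactly why diversity has to be considered when the test set is assembled.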

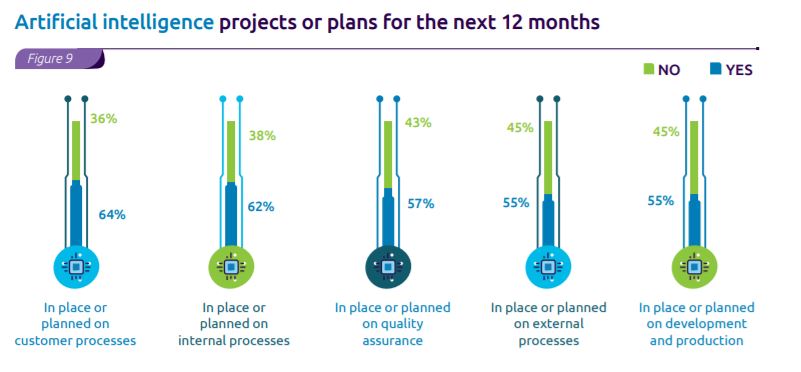

The latest World Quality Report indicates that over half of all organizations will be including AI somewhere in their customer or internal processes. This means we need to act now, especially as around half have experienced difficulty in setting these projects up effectively.

Sogeti’s book, Testing in the digital age: AI makes the difference, extends traditional testing to consider the new quality attributes that apply to intelligent machines. It also provides a five-hop roadmap for implementing effective AI testing to get the journey started. These hops are:

- Automation and robots – considers how to use automation to test AI and robots

- Use the data – use data as the basis for the testing strategy as data is key to all AI solutions

- Go model-based – use model-based testing to build confidence in the solution, especially around the more elusive characteristics

- Use artificial intelligence – consider using artificial intelligence to test your AI solution, but if you do, be aware that you are using one system whose behaviour you can’t fully explain to test another!

- Test forecasting – in the future test engineers will need to forecast the new technologies coming our way so that we can be ready to test them in the right way

I think it’s clear that testing AI solutions is in its infancy and that approaches and guidelines will be refined as best practice emerges. We therefore need to remain open and inquisitive to get the best solutions to the new challenges.
