RECOMMENDER SYSTEMS USING APRIORI – AN APPLICATION IN RETAIL USING PYTHON

February 4, 2019

Context

Have you ever browsed a book on an online portal and come across a section that says “Customers who bought this also bought...”? If so, you have already seen a recommender system at work.

Problem Statement

Marketers are often tasked with finding key product pairs that occur together in shoppers’ carts far more often than chance alone would explain. This blog explains how this can be achieved using the Apriori algorithm. These product pairs serve as the basis for recommending a second product to a customer who has already added one to the cart.

Some popular examples of product pair combinations can be as trivial or logical as bread and jam, while others can be more surprising such as beer and diapers.

Technically speaking, the order of the products in a pair also matters. One product in a pair is often the dominant one: whether bread sales lead to more jam sales, or jam sales lead to more bread sales, are two distinct cases, and each can get a different score.

We will look at this in detail in the next section when we break down the Apriori algorithm.

Meet the Algorithm: Apriori

Apriori is an algorithm used to identify frequent item sets (in our case, item pairs). It works by first identifying individual items that satisfy a minimum occurrence threshold. It then extends the item set, by looking at all possible pairs that still satisfy the specified threshold. As a final step, we calculate the following three metrics that are central to this algorithm.
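Before moving on to the metrics, here is a minimal sketch of the two pruning passes described above. The toy `orders` list, the `min_count` threshold and all variable names are assumptions made for illustration; they are not taken from the original implementation.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy orders; each order is a set of item names.
orders = [
    {"bread", "jam", "milk"},
    {"bread", "jam"},
    {"bread", "milk"},
    {"beer", "diapers"},
    {"bread", "jam", "beer"},
]
min_count = 2  # assumed minimum number of orders an item or pair must appear in

# Pass 1: keep only the individual items that meet the threshold.
item_counts = Counter(item for order in orders for item in order)
frequent_items = {item for item, n in item_counts.items() if n >= min_count}

# Pass 2: build pairs only from the surviving items, then apply the threshold again.
pair_counts = Counter(
    pair
    for order in orders
    for pair in combinations(sorted(order & frequent_items), 2)
)
frequent_pairs = {pair: n for pair, n in pair_counts.items() if n >= min_count}

print(frequent_pairs)
```

Dropping infrequent items before pairing is what keeps the number of candidate pairs manageable: a pair cannot meet the threshold if either of its items fails it.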

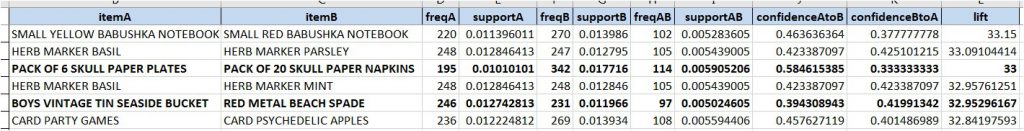

support: This is the percentage of orders that contain the item or item set. For example, if there are 5 orders in total and item A occurs in 3 of them, then:

support (A) = 3/5 or 60%
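The same calculation can be sketched in a few lines of Python. The `support` helper and the five toy orders are hypothetical, chosen so that item A appears in 3 of 5 orders as in the example above.

```python
def support(orders, *items):
    """Fraction of orders that contain every item in `items`."""
    wanted = set(items)
    return sum(wanted <= order for order in orders) / len(orders)

# Hypothetical orders: A occurs in 3 of the 5.
orders = [{"A", "B"}, {"A"}, {"A", "C"}, {"B"}, {"C"}]

print(support(orders, "A"))  # 0.6
```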

confidence: Given two items, A and B, confidence measures the percentage of times that item B is purchased, given that item A was purchased. This is expressed as:

confidence(A->B) = support(A,B) / support(A)
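As a small worked sketch of this formula (the helper names and toy orders are assumptions for illustration), note how swapping A and B changes the result:

```python
def support(orders, *items):
    """Fraction of orders that contain every item in `items`."""
    wanted = set(items)
    return sum(wanted <= order for order in orders) / len(orders)

def confidence(orders, a, b):
    """confidence(a -> b) = support(a, b) / support(a)."""
    return support(orders, a, b) / support(orders, a)

# Hypothetical orders: A is in 3 orders, B is in 2, and both appear together in 1.
orders = [{"A", "B"}, {"A"}, {"A", "C"}, {"B"}, {"C"}]

print(round(confidence(orders, "A", "B"), 3))  # 0.333
print(round(confidence(orders, "B", "A"), 3))  # 0.5
```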

lift: Given two items, A and B, lift indicates whether there is a relationship between A and B, or whether the two items occur together in the same order simply by chance (i.e., at random). Unlike the confidence metric, whose value may vary depending on direction (e.g., confidence(A->B) may be different from confidence(B->A)), lift has no direction. This means that lift(A,B) is always equal to lift(B,A):

lift(A,B) = lift(B,A) = support(A,B) / (support(A) * support(B))

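lift > 1 implies that there is a positive relationship between A and B (i.e., A and B occur together more often than they would have purely by chance). A lift of exactly 1 means the two items appear together about as often as chance would predict, and lift < 1 means they appear together less often.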
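A minimal sketch of the lift calculation, assuming a hypothetical `lift` helper and toy orders, shows both the symmetry and a value above 1:

```python
def support(orders, *items):
    """Fraction of orders that contain every item in `items`."""
    wanted = set(items)
    return sum(wanted <= order for order in orders) / len(orders)

def lift(orders, a, b):
    """lift(a, b) = support(a, b) / (support(a) * support(b))."""
    return support(orders, a, b) / (support(orders, a) * support(orders, b))

# Hypothetical orders: bread in 4 of 5, jam in 3 of 5, both together in 3 of 5.
orders = [
    {"bread", "jam", "milk"},
    {"bread", "jam"},
    {"bread", "milk"},
    {"beer", "diapers"},
    {"bread", "jam", "beer"},
]

print(round(lift(orders, "bread", "jam"), 2))  # 1.25
print(lift(orders, "bread", "jam") == lift(orders, "jam", "bread"))  # True
```

Here 0.6 / (0.8 * 0.6) = 1.25, so bread and jam appear together about 25% more often than they would if they were bought independently.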

Technical Details:

All the data analysis is performed using pandas, a Python library that offers data structures and operations for manipulating and analyzing numerical tables.

The analysis follows these steps:

- The dataset containing the transaction records from a retail store is read into memory as a pandas DataFrame, a data structure that holds tabular data in rows and columns. A new DataFrame is then created containing the list of all possible item-item pairs.

- New columns are added to the DataFrame and populated using custom Python functions that calculate the three metrics defined in the previous section: support, confidence and lift.

- Rows that don’t meet the minimum thresholds for support, confidence and lift are filtered out.
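The steps above can be sketched roughly as follows. This is an illustration under stated assumptions rather than the original code: the column names (`order_id`, `item`), the in-line toy data standing in for the retail dataset, and the threshold values are all hypothetical, and the metrics are computed with vectorized column arithmetic instead of custom functions.

```python
import pandas as pd

# Hypothetical transaction records: one row per (order, item).
df = pd.DataFrame({
    "order_id": [1, 1, 1, 2, 2, 3, 3, 4, 4, 5, 5, 5],
    "item": ["bread", "jam", "milk", "bread", "jam", "bread", "milk",
             "beer", "diapers", "bread", "jam", "beer"],
})
n_orders = df["order_id"].nunique()

# Support of each individual item: share of orders that contain it.
item_support = df.groupby("item")["order_id"].nunique() / n_orders

# Self-join on order_id to list ordered item pairs (A, B) with A != B.
pairs = df.merge(df, on="order_id", suffixes=("_A", "_B"))
pairs = pairs[pairs["item_A"] != pairs["item_B"]]

# Support of each pair, then the three metrics as new columns.
rules = (
    pairs.groupby(["item_A", "item_B"])["order_id"].nunique()
    .div(n_orders)
    .rename("support_AB")
    .reset_index()
)
rules["support_A"] = rules["item_A"].map(item_support)
rules["support_B"] = rules["item_B"].map(item_support)
rules["confidence"] = rules["support_AB"] / rules["support_A"]
rules["lift"] = rules["support_AB"] / (rules["support_A"] * rules["support_B"])

# Keep only rows that meet the (assumed) minimum thresholds.
result = rules[
    (rules["support_AB"] >= 0.3)
    & (rules["confidence"] >= 0.6)
    & (rules["lift"] > 1)
]
print(result[["item_A", "item_B", "support_AB", "confidence", "lift"]])
```

A self-join is easy to read but materializes every pair in memory, so it only suits small datasets.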

Leveraging the Power of Python:

Even for a relatively small dataset of 1,000 unique items, there can be roughly half a million item pairs (1000 × 999 / 2 = 499,500 unordered pairs, from elementary combinatorics). Keeping all of these in memory at once can prove quite a handful for your PC. This is where a Python generator comes in handy.
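The pair count is easy to verify with the standard library (`math.comb` and `math.perm` require Python 3.8 or later); the variable names are only illustrative:

```python
import math

n_items = 1000
unordered_pairs = math.comb(n_items, 2)  # {A, B} counted once
ordered_pairs = math.perm(n_items, 2)    # (A, B) and (B, A) counted separately

print(unordered_pairs)  # 499500
print(ordered_pairs)    # 999000
```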

A Python generator is a special type of function that returns an iterator over a sequence of items. However, unlike a regular function, which returns all of its values at once (e.g., all the elements of a list), a generator yields one value at a time. To get the next value in the sequence, we must ask for it, either explicitly by calling the built-in next() function on the generator, or implicitly via a for loop.

This is a great property of generators because it means that we don’t have to store all of the values in memory at once. This feature makes generators perfect for creating item pairs and counting their frequency of co-occurrence.
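As a minimal sketch of this idea, the hypothetical `item_pairs` generator below yields one pair at a time and a `Counter` tallies them as they arrive. The function name and toy orders are assumptions, not the original implementation.

```python
from collections import Counter
from itertools import combinations

def item_pairs(orders):
    """Yield one (item_a, item_b) pair at a time instead of building a full list."""
    for order in orders:
        # Sorting makes ("bread", "jam") and ("jam", "bread") count as the same pair.
        yield from combinations(sorted(order), 2)

# Hypothetical orders, each a list of item names.
orders = [["bread", "jam"], ["jam", "bread", "milk"], ["milk"]]

gen = item_pairs(orders)
first = next(gen)  # explicitly ask for the next value
print(first)       # ('bread', 'jam')

# Counter consumes the generator lazily, so the pairs are never all held in a list.
pair_counts = Counter(item_pairs(orders))
print(pair_counts[("bread", "jam")])  # 2
```

Only the running counts are held in memory; the individual pairs are discarded as soon as they are counted.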

Conclusion:

With an implementation of Apriori that uses Python iterators and generators to keep memory use in check, we can build a practical solution for finding product recommendations for customers. Some popular examples of product pairs can be as trivial as paper plates and napkins (as seen in the sample output), while others can be more surprising, such as beer and diapers.

Sample Output

References:

https://www.kaggle.com/datatheque/association-rules-mining-market-basket-analysis

For any further queries, please reach out to sogetiindiaaichatbotsblockchaincoe.in@capgemini.com

Co-authored by Rahul Sood
