THE DIGITAL ECHO CHAMBER

January 20, 2017

In the wake of the 2016 US Presidential elections, many news organizations and at least one political party heavily criticized the dissemination of “fake news” and the impact it had on the election. Fake news can be defined as “deliberately publish[ed] hoaxes, propaganda, and disinformation, [and] using social media to drive web traffic and amplify their effect”[1]. Unlike news satire, fake news is generally seen as a tool to mislead, rather than entertain, readers for financial or political gain. It is the difference between Russia Today and The Colbert Report.

Fake news has undoubtedly fomented political controversy, but it has also led to disturbing real news. One example of the real impact of fake news is the so-called Pizzagate. On December 4th, Edgar M. Welch, a consumer of fake news, entered a Washington, D.C. pizza joint and fired several rounds from his AR-15. This was ostensibly an attempt to free child slaves he believed to be imprisoned in the basement of Comet Ping Pong by a sex trafficking ring led by Hillary Clinton. Of course, none of this was true, but it demonstrates the real danger of fake news. Fortunately, the internet is here to combat this problem.

Lists of fake news sites and browser extensions that identify fake news sites, flag questionable Facebook posts, and correct Donald Trump’s tweets have been created to combat this scourge.[2] While these efforts attempt to curtail a disturbing trend, they are merely addressing a symptom, not the root cause.

But what is the root cause? What is driving this phenomenon? While there are many contributing factors, there are a few that stand out – Network Effects, Dispositional Trust and Machine Learning.

Network Effects

Network effects, also known as Positive Network Externalities or demand-side economies of scale, are created when scale is both the outcome of initial success and the engine for further growth[3]. That is to say, a network effect is created when increased supply drives increased demand and, more classically, vice versa.

Early examples of this are the Greek agora and the Roman forum. More recently, the concept was formalized when, in 1908, Theodore Vail, then CEO of American Telephone & Telegraph (AT&T), argued for consolidation of 4,000 local and regional telephone exchanges into a unified platform under the American Bell Telephone company, the parent company acquired by AT&T in 1899. The larger network would offer more value to new and existing customers, as they would be able to call many more people if on the American Bell network.
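To make Vail's argument concrete, here is a minimal sketch that counts how many distinct pairs of subscribers could call each other. The function name and the subscriber counts are illustrative assumptions, not historical figures, and counting possible connections is only one rough proxy for the value of a network.

```python
def possible_connections(subscribers: int) -> int:
    """Number of distinct pairs of subscribers who could call each other."""
    return subscribers * (subscribers - 1) // 2


# Hypothetical figures: two isolated exchanges of 1,000 subscribers each,
# compared with one merged network of 2,000 subscribers.
separate = 2 * possible_connections(1_000)
merged = possible_connections(2_000)

print(f"two separate exchanges: {separate:,} possible connections")
print(f"one merged network:     {merged:,} possible connections")
```

Under these assumptions, merging the two exchanges roughly doubles the number of possible connections without adding a single subscriber, which is the intuition behind the value of consolidation.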

However, this consolidation and the underlying network economics led to runaway market share and, in 1913, the first of many antitrust suits was filed by the US government, eventually leading to the breakup of ‘Ma Bell’ at the end of 1983. This is the power of the network effect. Today, you likely contribute to network effects via many of the digital services you use – Google, Facebook, Twitter, Uber, Microsoft Office, etc.

Because network effects drive consolidation onto a few social media networks, information, right or wrong, true or false, is disseminated much faster than at any other time in history. But there is something more.

Dispositional Trust

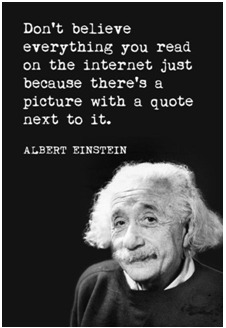

Most of us have heard the warning, “don’t believe everything you read”. And, when it comes to the internet, this is especially true. In fact, it’s probably smarter to not believe anything you read on the internet. Unfortunately, this is neither simple nor practical.

At a physical newsstand, the difference between a broadsheet or other legitimate news source and a tabloid is fairly obvious. A tabloid’s physical format, size, imagery, and fonts suggest its content is dubious, and prior interactions with tabloids have predisposed you to this conclusion.

In this circumstance, we are conditioned to distrust the genre due to prior experiences. But trust can also be conceptualized as a cross-situational, cross-personal construct, encompassing characteristics of both the source and medium.[4] Following this trust typology, we can refer to this as dispositional trust.

The problem with dispositional trust is that one can be disposed to trusting information for reasons outside the information itself. Take, for instance, social media. If a friend or connection shares an article, we are predisposed to trust it more than if it had come from a random source. This sense of trust can also extend to the social network itself. Facebook can become trusted because of its association with an established trust network, e.g. your friends. This becomes a problem when we are less critical of content shared on a social network because of this relationship.

Machine Learning

If network effects concentrate the conversation, and social media creates an inherent trust of the information, machine learning effectively turns the volume in the echo chamber up to 11. (Note: there are simplifications ahead, but the premise is accurate.)

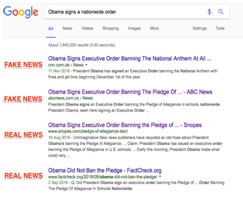

Ranking algorithms on social media sites and search engines use the number of links and the relative click-through rate (CTR) as some of their primary signals. For each results page, they expect a certain number of links to, and a certain CTR for, each position on that page. If a result garners more links and a higher CTR than expected, it receives a higher rank.
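The ranking idea above can be sketched in a few lines. This is a deliberately simplified illustration, not any real site's algorithm: the `EXPECTED_CTR` table, the `rerank` function, and the sample numbers are all hypothetical, and it uses CTR alone, ignoring links.

```python
# Hypothetical expected click-through rate for each result position (1 = top).
EXPECTED_CTR = {1: 0.30, 2: 0.15, 3: 0.10}


def rerank(results):
    """Reorder results by how far observed CTR exceeds the position's expected CTR.

    `results` is a list of (name, observed_ctr) tuples in their current order.
    """
    def lift(item):
        position, (_, observed_ctr) = item
        return observed_ctr / EXPECTED_CTR[position]

    ranked = sorted(enumerate(results, start=1), key=lift, reverse=True)
    return [name for _, (name, _) in ranked]


# Made-up observations: the third result is clicked twice as often as expected.
current = [("article_a", 0.28), ("article_b", 0.15), ("article_c", 0.20)]
print(rerank(current))
```

In this toy example the third result outperforms its slot by the widest margin, so it moves to the top, while the first result slightly underperforms its slot and drops to the bottom.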

Now, for the publisher, a fake (or real) news article’s success is measured by how many views it has. After all, advertisers on their sites are paying for eyeballs. More links = more clicks = more eyeballs. To solicit the highest number of links and clicks, fake news sites write provocative articles. These articles, it turns out, are more likely to be shared within a social network[5]. When the article begins to go viral, the CTR and number of links to it skyrocket. Recursive algorithms pick up on this and move the link successively higher on the search result pages.
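The feedback loop described here can be illustrated with a toy simulation. Everything in it is an assumption made for illustration: a two-slot feed, fixed view counts per slot, and invented share rates. It is a sketch of the dynamic, not a model of any actual platform.

```python
def simulate(share_rates, rounds=10, top_views=1000, lower_views=100):
    """Simulate a two-slot feed where accumulated shares decide who gets the top slot.

    `share_rates` maps an article name to the fraction of its viewers who share it.
    The first article in the mapping starts in the top slot.
    """
    shares = {name: 0.0 for name in share_rates}
    leader = next(iter(share_rates))
    for _ in range(rounds):
        for name, rate in share_rates.items():
            # The top slot receives far more views than the lower slot.
            views = top_views if name == leader else lower_views
            shares[name] += views * rate
        # Re-rank: the article with the most total shares takes the top slot.
        leader = max(shares, key=shares.get)
    return leader, shares


# Hypothetical share rates: 1% for a measured article, 20% for a provocative one.
leader, shares = simulate({"measured": 0.01, "provocative": 0.20})
print(leader, shares)
```

Even though the measured article starts in the top slot, the provocative one overtakes it after a single round under these assumptions, and the extra visibility then widens the gap every round after that.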

Recall the defining characteristic of a network effect: “scale is both the outcome of initial success and the engine for the further growth”. Due to the virality of information dissemination on social media and the recursive nature of many machine learning algorithms, we get a network effect on steroids. Pizzagate is just one recent example.

Pizzagate

On October 30th, an unverified account tweeted that the NYPD was investigating emails on former Congressman Anthony Weiner’s laptop. The tweet alleged the emails contained evidence of Clinton’s involvement in an “international child enslavement ring.”

Several months earlier, an anonymous 4chan user, who claimed to work in law enforcement, said something similar.

Next, Sean Adl-Tabatabai, a known conspiracy theorist, connected these dots and took it a step further, claiming an “FBI Insider” had corroborated the claim that the Clintons were connected to a “Political Pedophile Sex Ring”.

Within 3 days of publication, the story had been shared millions of times, and it ultimately led a confused man to open fire in Comet Ping Pong in Washington, D.C. The confluence of social, psychological and technological phenomena created a self-perpetuating echo chamber, whereby the story went viral and its veracity went untested by many.

There is, no doubt, plenty of blame to go around and no simple solution to the problem, but it is ultimately incumbent on all of us to seek contrasting points of view and think critically about what we read. To that point, I will end, ironically, with a quote falsely attributed to Aristotle on at least 10 pages of Google search results – “It is the mark of an educated mind to be able to entertain a thought without accepting it.”

[1] https://en.wikipedia.org/wiki/Fake_news_website

[2] https://fivethirtyeight.com/features/fact-checking-wont-save-us-from-fake-news/

[3] http://www.bearingpoint.com/ecomaXL/files/Global_Platform_Survey_Jan_2016.pdf&download=0

[4] Sonja Grabner-Kräuter and Sofie Bitter; Trust in online social networks: A multifaceted perspective; Forum for Social Economics, Vol. 44, Iss. 1, 2015

[5] http://jonahberger.com/wp-content/uploads/2013/02/Arousal2.pdf
