The evolution from traditional Business Intelligence to Advanced Analytics does not rest only on the ability to handle the 3 Vs of Big Data (Velocity, Volume and Variety) or on advances in Artificial Intelligence models that help the business. It must also take into account concepts such as security and process control, which guarantee reproducibility, efficiency and traceability. These are a fundamental part of data projects and allow us to build complex solutions with confidence.

Over the years, every organization has become (or should have become) immersed in its digital transformation journey. Some are already well along the way, while others are still in the early stages or may only be thinking about it, although I would like to believe that no company is still at the starting line. That would certainly be a sign that things are finally being done right.

As I mentioned at the beginning of the article, creating a DataOps framework is mandatory and should be part of the best practices of any organization. The development world offers very powerful tools and solutions, so not trying to make the most of their capabilities seems paradoxical to me and says a lot about each organization. For example, Azure currently allows many of its components to be linked to code repositories, so developers can treat them like any other piece of software. The same is true of Visual Studio, which has supported the development of data projects for years. So why is this not common practice?

I do not have an answer to this question. I suppose it could be due to a number of reasons, such as the cost of Visual Studio licenses compared with SSMS, the initial technical complexity or learning curve, and the extra time needed to implement "simple" changes. That last objection, by the way, collapses under its own weight if you work on projects with databases deployed in multiple regions and, in turn, in several environments. If I had to bet on a single reason, though, it would undoubtedly be that many traditional BI professionals have always done things the same way and are reluctant to change. What's more, many of them are now project managers, managers or even technical leads, so admitting that their approach is not optimal could be embarrassing. Nevertheless, anyone working without a robust DevOps framework will be left behind.
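To make the point about multiple regions and environments concrete, here is a minimal sketch in Python. The region and environment names are hypothetical and chosen only for illustration. It simply counts how many separate deployments a single "simple" change implies when it has to be applied by hand.

```python
from itertools import product

# Hypothetical region and environment names, for illustration only.
REGIONS = ["west-europe", "east-us", "southeast-asia"]
ENVIRONMENTS = ["dev", "test", "prod"]


def deployment_targets(regions, environments):
    """Return every (region, environment) pair a schema change must reach."""
    return list(product(regions, environments))


targets = deployment_targets(REGIONS, ENVIRONMENTS)
print(f"One change means {len(targets)} deployments")
for region, environment in targets:
    print(f"  deploy to {environment} in {region}")
```

With three regions and three environments, a single change already has to reach nine targets, which is where an automated pipeline starts to pay for its setup cost.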

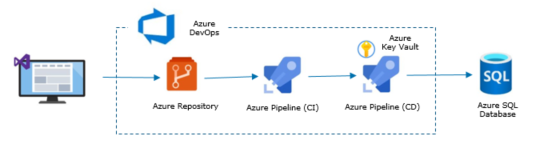

To cut a long story short, here is a simple diagram of how Visual Studio is used together with Azure DevOps to deploy the objects of a database. I still owe a step-by-step description of how to create and integrate a set of database objects using Visual Studio and Azure DevOps.

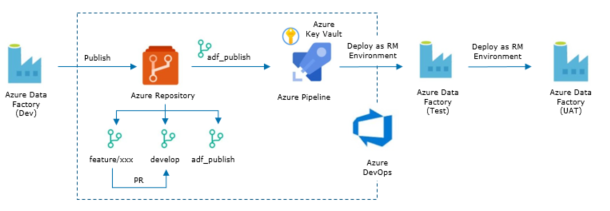

Let me also include the basic diagram of development with Azure Data Factory. If you want to explore in more detail how an ADF pipeline is displayed, you just have to click this link.

Conclusion – Technology advances at an incredible rate, which demands extra effort. But honestly, when you look at the advantages it offers and how it lets you be more effective and deliver greater value to your colleagues and customers, you realize it is definitely worth it.

Featured Image – thanks to Artem Podrez at Pexels
