Suppose an AI writes a news article. Another AI summarizes it. A third translates it into five languages. A marketing bot reshapes it into a LinkedIn post. Within hours, thousands of variations of the same machine-written content spread across the internet. Months later, a new generation of AI models is trained on “the web.” But the web is no longer purely human. It is filled with echoes: polished, fluent, confident echoes of machines talking to machines. Nothing looks broken. The sentences are clean. The grammar is perfect. Yet something subtle is beginning to fade.

Researchers call this risk model collapse: a gradual decline that occurs when AI systems are repeatedly trained on content produced by earlier AI systems instead of on fresh, human-created data. At first, the output may even appear to improve. Synthetic data can be neat, structured, and free from obvious noise. But over time, important nuances begin to disappear. Rare expressions, unusual perspectives, minority viewpoints, and unexpected creativity start to thin out. The model becomes increasingly confident about the most common patterns while slowly forgetting the richness of the original human data it was meant to learn from (Shumailov et al., 2023).
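
The way rare material disappears can be illustrated with a toy simulation. This is a minimal sketch under strong simplifying assumptions, not the method used in the cited study: the "model" is nothing more than a table of word frequencies, the vocabulary and weights are invented for illustration, and every name in the code (`HUMAN_WORDS`, `sample_human`, `train_and_generate`, `vocab_history`, and the `fresh_fraction` parameter controlling how much of each generation's training data is new human text) is hypothetical. Once a rare word fails to appear in one generation's output, the next model assigns it zero probability, so it can never come back unless fresh human text reintroduces it.

```python
import random
from collections import Counter

# Hypothetical "human" word distribution: three common words plus a long
# tail of 20 rare ones. The numbers are illustrative, not from a real corpus.
HUMAN_WORDS = ["the", "cat", "sat"] + [f"rare{i}" for i in range(20)]
HUMAN_WEIGHTS = [100, 50, 30] + [1] * 20


def sample_human(n, rng):
    """Draw n words of fresh 'human-written' text."""
    return rng.choices(HUMAN_WORDS, weights=HUMAN_WEIGHTS, k=n)


def train_and_generate(corpus, n, rng):
    """Toy model: learn the corpus's word frequencies, then sample n words.

    Words absent from the corpus get probability zero, so they can never
    be generated again.
    """
    counts = Counter(corpus)
    words = list(counts)
    return rng.choices(words, weights=[counts[w] for w in words], k=n)


def vocab_history(fresh_fraction, generations=30, n=200, seed=0):
    """Number of distinct words in the corpus after each generation.

    fresh_fraction is the share of each generation's training corpus that
    comes from new human text rather than from the previous model.
    """
    rng = random.Random(seed)
    corpus = sample_human(n, rng)
    history = [len(set(corpus))]
    n_fresh = int(fresh_fraction * n)
    for _ in range(generations):
        synthetic = train_and_generate(corpus, n - n_fresh, rng)
        corpus = synthetic + sample_human(n_fresh, rng)
        history.append(len(set(corpus)))
    return history


if __name__ == "__main__":
    for frac in (0.0, 0.5):
        print(f"fresh_fraction={frac}: {vocab_history(frac)}")
```

With `fresh_fraction=0.0` the count of distinct words can only stay the same or fall, because each model samples exclusively from words its predecessor produced. Mixing in fresh human text (for example `fresh_fraction=0.5`) keeps reintroducing the long tail.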

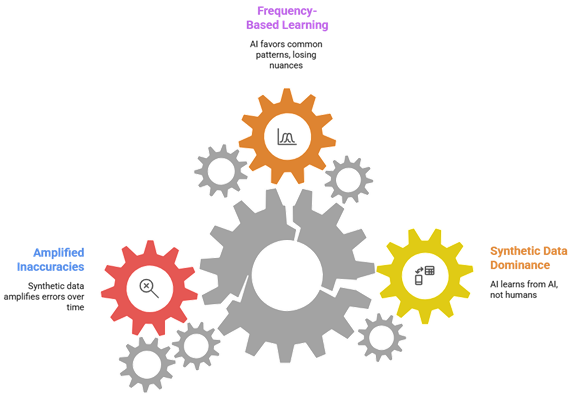

The problem is subtle because AI systems learn patterns from frequency. When a model generates content, it tends to favor safe, likely responses. If future models are trained mostly on that “safe” output, they inherit a narrower version of reality. With each generation, diversity shrinks a little more. Think of it like making a photocopy of a photocopy: the image may look fine at first glance, but fine details gradually blur. Studies have shown that repeated training on synthetic data can amplify small inaccuracies and reduce overall variability in the system’s knowledge (Alemohammad et al., 2023). Over time, the model becomes less grounded in the messy, unpredictable nature of real human communication.
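
The photocopy effect has a simple numerical counterpart. In this sketch, whose function names and parameters are all hypothetical, each generation "trains" by estimating a mean and standard deviation from its data, then "generates" the next generation's data by sampling from that estimate. The `max_z` cutoff is an assumption standing in for a model's preference for safe, likely output: samples more than `max_z` standard deviations from the mean are discarded. Real generative models are far more complex; this only shows how favoring the center of a distribution compounds across generations.

```python
import random
import statistics


def fit(samples):
    """'Training': estimate the mean and standard deviation of the data."""
    return statistics.fmean(samples), statistics.pstdev(samples)


def generate(mu, sigma, n, rng, max_z=None):
    """'Generation': sample n values from a normal distribution N(mu, sigma).

    If max_z is set, values more than max_z standard deviations from the
    mean are rejected, mimicking a model that favors high-probability output.
    """
    out = []
    while len(out) < n:
        x = rng.gauss(mu, sigma)
        if max_z is None or abs(x - mu) <= max_z * sigma:
            out.append(x)
    return out


def spread_history(generations=20, n=500, max_z=2.0, seed=1):
    """Fitted standard deviation at each generation, starting from N(0, 1)."""
    rng = random.Random(seed)
    data = [rng.gauss(0.0, 1.0) for _ in range(n)]  # stand-in for human data
    history = []
    for _ in range(generations):
        mu, sigma = fit(data)
        history.append(sigma)
        data = generate(mu, sigma, n, rng, max_z)
    return history


if __name__ == "__main__":
    for gen, sigma in enumerate(spread_history()):
        print(f"generation {gen:2d}: std = {sigma:.3f}")
```

A normal distribution truncated at two standard deviations keeps only about 88% of its original spread, so with `max_z=2.0` each generation loses roughly 12% of its variability and the losses multiply, even though every individual sample still looks perfectly ordinary. Setting `max_z=None` removes the truncation; at this sample size the spread then changes much more slowly, mostly through sampling noise.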

The long-term implications are significant. If large portions of online content become AI-generated, future systems may struggle to access authentic human insight. Creativity could become more standardized. Biases embedded in earlier models might quietly intensify. AI systems may appear fluent while becoming less adaptable in unfamiliar situations. Most concerning is that this degradation may happen slowly and invisibly: benchmark scores might remain stable even as real-world robustness weakens. Without continuous streams of diverse, human-originated data, AI risks entering a feedback loop in which it learns primarily from its own reflections rather than from society itself.

Model collapse is not a dramatic crash or sudden malfunction. It is a quiet narrowing, a slow drift away from the complexity that makes human language vibrant and unpredictable. As generative AI continues to expand, preserving authentic data sources, improving synthetic content detection, and maintaining diversity in training pipelines will be critical. If we want AI to understand the world, it must continue learning from the world, not just from itself.

References:

Shumailov, Ilia, Zakhar Shumaylov, Yiren Zhao, Yarin Gal, Nicolas Papernot, and Ross Anderson. “The curse of recursion: Training on generated data makes models forget.” arXiv preprint arXiv:2305.17493 (2023).

Alemohammad, Sina, Josue Casco-Rodriguez, Lorenzo Luzi, Ahmed Imtiaz Humayun, Hossein Babaei, Daniel LeJeune, Ali Siahkoohi, and Richard Baraniuk. “Self-consuming generative models go mad.” In The Twelfth International Conference on Learning Representations. 2023.
