TOP 10 POST: U0026QUOT;THE NEW FACES OF INTERNET (PART 3): INTERNET OF CONTENTSU0026QUOT;

January 7, 2014

Over the holidays, we will repost the top ten most popular blog posts of the year. This is one:

The evolution of internet and its success are intimately linked to the evolution of its content. If internet was in a first place a simple ecosystem to transfer files, more interactive and user-friendly applications appeared at the end of 80’s. And what it appeared as a revolution for Internet ten years ago such as looking at television through Internet, video-on-demand or VoIP, is now the bare minimum required for a basic usage of any internet connection.

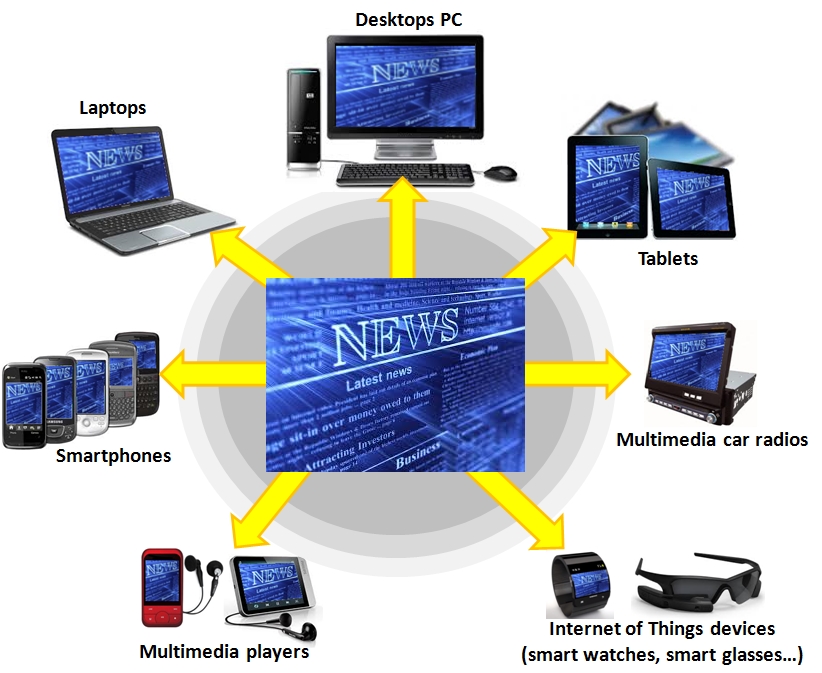

Diversification of internet contents, associated to an ever-growing numbers and miscellaneous connected devices (now fully mobile for the most of them) is creating an increasing users’ demand to be connected anywhere and anytime. But answering to this user’s demand drains some big issues to be solved.

- Contents must be natively designed to be displayed on all kind of devices (desktops, notebooks, tablets and Smartphones of course, but soon also with other kind of connected devices such as smart-watches, smart-glasses, etc), whatever the kind of network (wired or wireless) or the operating system used. This is a source of big complexity concerning the coding, storage, transport and reproduction of these contents so much different are the technical features of these devices.

• Heterogeneity of data transmission throughputs make difficult to control quality of delivered service. Controlling QoS requires a precise control of end-to-end networks flows, and it can require to set up adequate technologies or hardware, such as CDNs (Content Delivery Network, dedicated devices cooperating amongst themselves to deliver content and reducing access time to these content), or VPNs (Virtual Private Networks which allow isolation of data flows and connect end-to-end devices).

• The simultaneity of the arrival of low costs connected devices, high speed internet, and enhancement and ease-of-use of development tools dedicated to internet applications, allow any internet users today to produce its own contents in the blink of an eye. However, the proliferation of these user-generated contents are the cause of several issues, especially concerning:

o The control of the nature of the accessible content.

o The safeguarding of data, and the respect of property, laws, and reproduction rights.

o The updating of these contents, and the removal of obsolete contents.

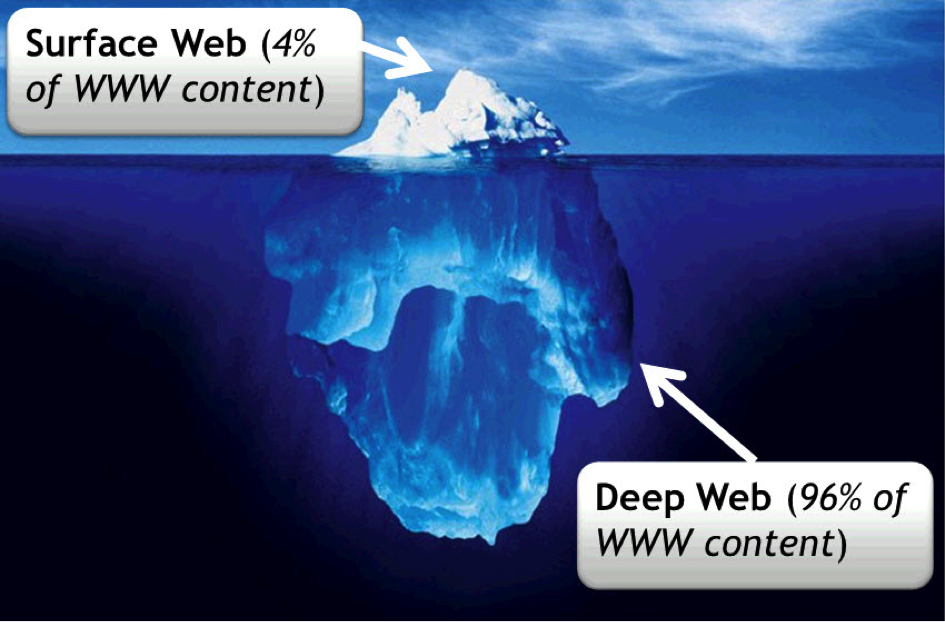

Navigating on this mass of information is more and more of a challenge, particularly when much of that content is not accessible by traditional search engines. Because Internet is not only made of web pages from sites user can access directly (such as news sites, social media sites, online retailers, government or organization sites)via links or via common search engines like Google. This “Surface web”, also called “Searchable web” or “Visible Web”, and the number of non-textual files (such as videos, images, miscellaneous files, or content stored databases) far exceeds the ‘searchable’ content. This Surface web is large at over 8 billion pages, but is only the top of iceberg regarding the size of Internet.

The “Deep Web” (also known as the “Invisible Web”, “Hidden Web”, or “Undernet”) is the mass of pages, sites, and heterogeneous data which are not indexed by Search engines, leaving the content “hidden” from them.

According to CompletePlanet:

- In the Deep Web, public information is currently 400 to 550 times larger than the commonly defined World Wide Web.

- The Deep Web contains 7,500 terabytes of information, compared to 19 terabytes of information in the “surface” (searchable) Web.

- The Deep Web contains nearly 550 billion individual documents compared to the 1 billion of the “surface” Web.

- More than an estimated 200,000 Deep Web sites presently exist. Sixty of the largest Deep Web sites collectively contain about 750 terabytes of information – sufficient by themselves to exceed the size of the surface Web by 40 times.

Even if it is considered that this Deep Web is 96% of the web content, and is hidden and not indexed, it is a necessity to index and structure the 4% remaining, which is still billions of heterogeneous data, and the permanent update of directly accessible internet contents is still a major issue.

Then efficient solutions must be found to interact with this mass of digital data: Extended request, auto-learning process, creation of a “Semantic web” to give sense to data and understand them in an automated way, etc.

Finally, obsolete data must be identified, marked and deleted. But nowadays, no solutions exist to know, on simple and/or automated way, the obsolescence state of available information on Internet.

The question related to durability of Internet content is also a major challenge: As the whole books written during Greco-Roman antiquity could be burned in a single DVD, the mass of digital information generated on Internet during the single year 2008 represents 487 exabytes (or 487 billion gigabytes, IDC study), the equivalent of 15 billions of Blu-Ray discs. And by the year 2015, it is estimated that one zettabyte (1024 power 7bytes) of content will be added to the web each and every year.

- So, how can be evaluated the value and the relevance of information produced each year on Internet?

- How can be made durable the portion of this mass of information which is relevant and have a real value?

- What process must be applied at mid-term, or at a long-term view (on the history scale)?

In the domain of content data management available on Internet, the economic and scientific stakes along with issues to solve are at the same time considerable and protean. A lot of researchers and engineers are working to find the best solutions to these issues in order to allow the most harmonious development of new contents and new services in the year to come.

And I might precisely talk on this new face of Internet in one of my next blog’s articles: The Internet of Services.

Stay tuned!

English | EN

English | EN