SERVICE VIRTUALIZATION: NEW OR OLD NEWS FOR SOFTWARE TESTERS?

November 14, 2013

Service virtualization is a method to simulate the behavior of specific applications (or parts of them) and/or interfaces in component-based environments. It gives development and quality assurance teams cheap, easy, and fast access to virtualized versions of components they need for their work but that are usually unavailable or difficult to access.

Virtualization is, of course, not a new concept in IT; it has been around for quite some time. Environment components used to be virtualized by means of mocks or stubs, and they often still are. A stub is a temporary version of a certain application part that simulates the basic behavior of that functionality. Recently, tool vendors have introduced service virtualization tools that enable a tester to record and model the behavior of a specific application part. Building such a stub (or virtual service) no longer requires hard development work; it can be done semi-automatically, without much scripting, which makes it ideal for testers. So the principle can be considered “old”, but the way of doing it is quite new.
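To make the record-and-model idea concrete, here is a minimal sketch in Python of a record-and-replay virtual service. It is an illustration under stated assumptions, not how any particular vendor tool works: the class `VirtualService`, its `record` and `handle` methods, and the `real_customer_lookup` backend are all hypothetical names. During recording, each request is forwarded once to the real system and its response is stored; during replay, tests talk only to the virtual service.

```python
import json
from typing import Callable, Dict, Tuple

# Hypothetical key type: (operation name, canonical JSON of the request payload)
RequestKey = Tuple[str, str]


class VirtualService:
    """Hypothetical record-and-replay stub standing in for an unavailable dependency."""

    def __init__(self) -> None:
        self._recordings: Dict[RequestKey, dict] = {}

    @staticmethod
    def _key(operation: str, request: dict) -> RequestKey:
        # sort_keys makes the key independent of the payload's key order
        return (operation, json.dumps(request, sort_keys=True))

    def record(self, operation: str, request: dict,
               real_call: Callable[[dict], dict]) -> dict:
        """Forward the request to the real system once and store its response."""
        response = real_call(request)
        self._recordings[self._key(operation, request)] = response
        return response

    def handle(self, operation: str, request: dict) -> dict:
        """Replay a recorded response, or return a default error for unknown requests."""
        key = self._key(operation, request)
        if key in self._recordings:
            return self._recordings[key]
        return {"status": 404, "error": f"no recording for {operation}"}


def real_customer_lookup(request: dict) -> dict:
    # Hypothetical stand-in for a real backend that is normally slow or unavailable.
    return {"status": 200, "customer_id": request["id"], "name": "Alice"}


if __name__ == "__main__":
    service = VirtualService()
    # Recording phase: the real system only needs to be reachable once.
    service.record("getCustomer", {"id": 42}, real_customer_lookup)
    # Replay phase: tests run against the virtual service alone.
    print(service.handle("getCustomer", {"id": 42}))
    print(service.handle("getCustomer", {"id": 7}))
```

Keying recordings on the operation name plus a canonical form of the request keeps replay deterministic, and the fallback response for unrecorded requests mirrors how a hand-written stub simulates only basic behavior. Real service virtualization tools add protocol handling and richer behavior modeling on top of this basic idea.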

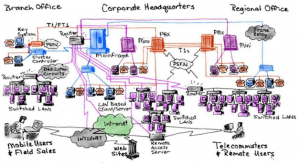

More important, however, is the question of why this service is becoming increasingly “hot” in IT and QA. Applications are no longer silos in a larger landscape; they are integrated with multiple other systems inside and outside the (technical) boundaries of the organization, using different technologies. Each of these systems is maintained by different people or teams and follows its own release planning, without a central, overall view of the status of all these environment components.

For testers, this complex ecosystem is a nightmare when it comes to executing the necessary tests within a reasonable time. Testers are “naturally” more focused on the end-to-end functioning of applications, while development teams are often organized in silos working on one or more applications, but certainly not on the complete landscape. Too many technical dependencies in this landscape force the end-to-end tester to focus on “getting it working” (with the support of others) instead of on the genuine verification work a tester is expected to do. This is the perfect setting for service virtualization to take off.

Just like cloud computing, service virtualization is not new from a technical point of view. However, the current context of cost cutting, time-to-market pressure, and productivity gains has led to significant growth in this IT service, certainly when the tester controls the service and is no longer dependent on other teams' agendas, planning, people, and so on. In this context, the business case for service virtualization becomes increasingly visible and relevant for decision makers (it was already obvious to testers). The tooling has of course improved technologically as well, but the underlying concepts have existed for some time and remain basically the same.

The real change, then, is that the business context is accelerating (or further enhancing) this technology, not the other way around. And doesn’t that change sound familiar to testers as well…?