The expansion of digital infrastructures has brought unprecedented computing capabilities to organizations, but it has also intensified concerns about energy consumption. Modern data centers are essential to daily operations; nevertheless, they concentrate enormous energy demand that continues to grow [1]. As industry looks for ways to operate more responsibly and efficiently, Artificial Intelligence is increasingly considered a powerful enabler of energy optimization. This raises a natural and important question: Does the energy used to train and operate AI models outweigh the energy savings they provide?

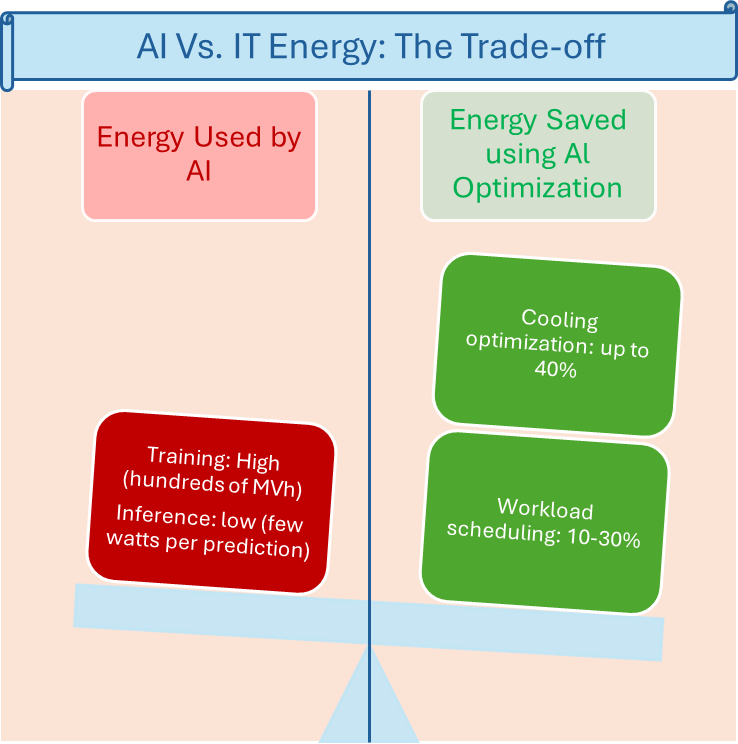

To understand this balance, it helps to examine how AI consumes energy throughout its lifecycle. The training phase of advanced machine learning models can be intensive. Training may require large datasets and repeated calculations that, in some cases, amount to tens or hundreds of megawatt-hours [2], [3]. This figure can seem alarming at first glance, but what matters scientifically is the distribution of this cost over time. Training happens once. Once a model is ready, it enters a long period of deployment during which it performs inference. Unlike training, inference consumes very little energy. Making a prediction or adjusting an optimization parameter typically draws only a few watts of power. Over days, months, and years of continuous operation, the cumulative energy cost of inference remains remarkably small.
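The lifecycle split described above can be made concrete with a small calculation. The following is a minimal sketch, not a measurement: the function name `lifecycle_energy_mwh` and the input values (50 MWh of training, a 5 W inference draw, three years of operation) are illustrative assumptions chosen to sit within the ranges mentioned in the text.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def lifecycle_energy_mwh(training_mwh: float, inference_watts: float, years: float) -> float:
    """Return total energy in MWh: one-time training plus continuous inference.

    All arguments are hypothetical inputs supplied by the caller.
    """
    # Watts * hours gives watt-hours; divide by 1,000,000 to convert to MWh.
    inference_mwh = inference_watts * HOURS_PER_YEAR * years / 1_000_000
    return training_mwh + inference_mwh


if __name__ == "__main__":
    # Assumed values for illustration only.
    training = 50.0      # MWh, one-time
    draw = 5.0           # W, continuous inference
    lifetime = 3.0       # years in deployment

    total = lifecycle_energy_mwh(training, draw, lifetime)
    inference_only = total - training
    print(f"Training:  {training:.4f} MWh")
    print(f"Inference: {inference_only:.4f} MWh over {lifetime:g} years")
    print(f"Total:     {total:.4f} MWh")
```

Under these assumed inputs, three years of continuous inference adds roughly 0.13 MWh to the 50 MWh spent on training, which illustrates why the training phase dominates the cost side of the balance.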

What transforms this picture is the scale of the savings that AI enables when integrated into IT operations. Cooling systems in data centers are one of the clearest examples. Cooling can represent up to 40 percent of a facility’s total energy consumption. AI models can sense and predict thermal conditions with a level of precision that human operators or static control rules cannot achieve. By adjusting cooling strategies in real time, they can reduce cooling energy usage by amounts that routinely reach between 20 and 40 percent [4], [5]. Comparable impacts are observed when AI is used to optimize workload distribution. By predicting demand, grouping tasks more efficiently, and identifying resources that can be powered down without risk, AI reduces unnecessary energy expenditure while improving overall system stability.

When we compare the initial energy cost of training with the ongoing energy savings produced over time, a clear trend emerges. Even models that require substantial energy to train produce savings that rapidly exceed their training cost. Once deployed, the model becomes an ongoing source of efficiency gains. Moreover, a single trained model can be reused across multiple sites without being retrained from scratch, which significantly amplifies its positive energy impact. Scientific studies and real-world deployments by large data center operators consistently show that the net energy balance of AI is strongly favorable [1], [3].
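To see how quickly savings can overtake the training cost, the comparison can be sketched as a simple payback calculation. This is a hedged illustration under stated assumptions: the function `payback_days` is a hypothetical helper, the facility size of 10,000 MWh per year is invented for the example, and the cooling share and reduction are taken from the low end of the ranges quoted earlier in the article.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def payback_days(
    training_mwh: float,
    facility_mwh_per_year: float,
    cooling_share: float,
    cooling_reduction: float,
    inference_watts: float = 0.0,
) -> float:
    """Days of operation needed for energy savings to equal the training cost.

    Returns infinity if the model saves no more than its own inference uses.
    All arguments are hypothetical inputs supplied by the caller.
    """
    # Energy spent on cooling each year, before optimization.
    cooling_mwh = facility_mwh_per_year * cooling_share
    # Energy saved each year by the AI-driven cooling control.
    saved_mwh = cooling_mwh * cooling_reduction
    # Energy the deployed model itself consumes each year (Wh -> MWh).
    inference_mwh = inference_watts * HOURS_PER_YEAR / 1_000_000
    net_saving = saved_mwh - inference_mwh
    if net_saving <= 0:
        return float("inf")
    return training_mwh / net_saving * 365


if __name__ == "__main__":
    # Assumed values for illustration only.
    days = payback_days(
        training_mwh=100.0,            # one-time training cost
        facility_mwh_per_year=10_000,  # hypothetical facility consumption
        cooling_share=0.40,            # cooling as a share of total energy
        cooling_reduction=0.20,        # low end of the quoted savings range
        inference_watts=5.0,           # continuous inference draw
    )
    print(f"Training energy recovered after about {days:.0f} days")
```

With these assumed numbers the training energy is recovered in well under two months. The result is sensitive to the inputs: a smaller facility or a lower cooling share lengthens the payback period proportionally, so the calculation should be rerun with site-specific measurements.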

Beyond measurable reductions in energy use, AI contributes to broader environmental and operational benefits. By consuming less energy, organizations lower their carbon emissions and reduce the environmental footprint of their digital activities. More intelligent control of cooling and workload distribution also reduces wear on infrastructure, which supports system reliability and extends hardware life. These improvements have human implications as well. They reinforce the resilience of the digital services that individuals and communities rely on every day, while helping organizations take meaningful steps toward sustainability.

In summary, scientific evidence shows that the energy required to develop and run AI systems is significantly outweighed by the energy they save in return [1], [4]. When applied to IT energy optimization, AI becomes a clear net-positive technology that leads to more efficient, more resilient, and more sustainable digital infrastructures. Far from being an added burden, AI plays a constructive role in building a more responsible technological ecosystem.
