HOW BOTS LEARN, UNLEARN AND RELEARN

July 27, 2018

How is a bot learning to recognize images?

How is a bot learning to find lungs with cancer?

What if a bot is wrong? Can it unlearn to fix its mistake?

Continued from Part 1 – What is a robot?

Supervised learning

Supervised learning requires humans to define the bot’s outputs as categories.

For example, if the bot needs to recognize images, then a developer needs to define which categories the images can be put into. A bot can get 10 categories, where each category contains 100 images. The bot will analyze the images in multiple ways to find the similarities between images in the same category and the differences from images in the other categories.

There are multiple algorithms for analyzing an image, and the bot developer needs to figure out which algorithms are best for the bot’s purpose. To test the bot, the developer will need another 100 images to verify that the bot categorizes new images correctly. The problem is that the developer doesn’t know which similarities and differences the bot uses, and it will take time to reverse engineer the bot (if that is even possible, given the complexity).

The developer can’t be sure that a new image in the near future won’t be put in the wrong category. If this happens and the error is caught, the developer has to make the bot learn the new image type by including the new image in the original learning dataset. The developer might also need to change one or more of the image analysis algorithms in use for the bot to categorize the new image correctly.
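The train, test and relearn cycle described above can be sketched in a few lines. This is only an illustration under loud assumptions: each "image" is reduced to two made-up numbers, the categories `cat` and `dog` are hypothetical, and a nearest-centroid classifier stands in for whatever image analysis algorithms a real bot would use.

```python
from statistics import mean

def train(labelled):
    """Learn one centroid (average feature vector) per human-defined category."""
    return {category: [mean(col) for col in zip(*vectors)]
            for category, vectors in labelled.items()}

def classify(centroids, vector):
    """Put a vector in the category whose centroid is nearest."""
    def squared_distance(category):
        return sum((a - b) ** 2 for a, b in zip(centroids[category], vector))
    return min(centroids, key=squared_distance)

def accuracy(centroids, test_set):
    """Share of held-out (vector, expected_category) pairs classified correctly."""
    hits = sum(classify(centroids, vector) == expected for vector, expected in test_set)
    return hits / len(test_set)

# Hypothetical learning dataset: two categories, three "images" each.
training = {
    "cat": [[0.90, 0.10], [0.80, 0.20], [0.85, 0.15]],
    "dog": [[0.20, 0.90], [0.10, 0.80], [0.15, 0.85]],
}
# Held-out images the bot has never seen, used only for testing.
held_out = [([0.88, 0.12], "cat"), ([0.12, 0.82], "dog"), ([0.55, 0.50], "dog")]

centroids = train(training)
before = accuracy(centroids, held_out)   # the third image lands in the wrong category

# The error is caught: add the new image type to the learning dataset and retrain.
training["dog"].append([0.55, 0.50])
centroids = train(training)
after = accuracy(centroids, held_out)

print(before, after)
```

In this toy case retraining on the extra image is enough. With real images the developer might also have to swap the features or the algorithm, and should verify the retrained bot on fresh images rather than on the one just added.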

Unsupervised learning

Unsupervised bots don’t require any predefined categories.

For example, the bot gets 1,000 images, which it will analyze with image analysis algorithms (chosen by the developer). The bot will create its own categories from the similarities and differences it finds in the images. The developer can then look through all the categories and see if any of them can be used for a business case.
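As a rough sketch of a bot inventing its own categories, the snippet below groups points with a bare-bones k-means loop. The two-number "images" are made up, and k-means is just one possible clustering algorithm chosen here for illustration, not necessarily what a given bot uses.

```python
def nearest(point, centroids):
    """Index of the centroid closest to `point` (squared Euclidean distance)."""
    return min(range(len(centroids)),
               key=lambda i: sum((a - b) ** 2 for a, b in zip(point, centroids[i])))

def kmeans(points, k, iterations=10):
    """Group points into k categories that the algorithm defines itself."""
    centroids = [list(p) for p in points[:k]]      # simple deterministic start
    for _ in range(iterations):
        groups = [[] for _ in range(k)]
        for p in points:
            groups[nearest(p, centroids)].append(p)
        for i, members in enumerate(groups):
            if members:                            # keep the old centroid if a group is empty
                centroids[i] = [sum(col) / len(members) for col in zip(*members)]
    return [nearest(p, centroids) for p in points]

# Hypothetical features extracted from six unlabelled images.
images = [[1.0, 1.0], [9.0, 9.0], [1.2, 0.8], [8.8, 9.1], [0.9, 1.1], [9.2, 8.9]]
categories = kmeans(images, k=2)
print(categories)   # [0, 1, 0, 1, 0, 1]
```

Nobody told the bot what category 0 or 1 means. Interpreting them is left to the developer.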

For example, a bot can get 10,000 images of lungs and create 100 categories. The developer looks through the categories and finds that:

- 80 % of the images in 8 of the categories contain lungs with cancer.

- 5 % of the images in the other 92 categories contain lungs with cancer.

The bot can then be used to scan new images: if an image is put in one of the 8 categories where 80 % of the lungs contained cancer, then there is a high probability that the lungs have cancer. Again, the developer doesn’t know which similarities and differences the bot uses and therefore can’t tell how precise the bot will be in the future.
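The developer's step of looking through the categories can be sketched as a per-category cancer rate followed by a threshold. The data below is invented, far smaller than 10,000 images, and the 0.8 threshold simply mirrors the 80 % figure in the example.

```python
from collections import defaultdict

def cancer_rate_per_category(categories, has_cancer):
    """Share of images with a confirmed diagnosis in each bot-made category."""
    totals, positives = defaultdict(int), defaultdict(int)
    for category, sick in zip(categories, has_cancer):
        totals[category] += 1
        positives[category] += int(sick)
    return {c: positives[c] / totals[c] for c in totals}

def flag_high_risk(rates, threshold=0.8):
    """Categories where at least `threshold` of the images showed cancer."""
    return {c for c, rate in rates.items() if rate >= threshold}

# Hypothetical result: 15 images, 3 categories, diagnoses known afterwards.
categories = [0] * 5 + [1] * 5 + [2] * 5
has_cancer = [True, True, True, True, False,
              False, True, False, False, False,
              False, False, False, False, False]

rates = cancer_rate_per_category(categories, has_cancer)
high_risk = flag_high_risk(rates)
print(rates)       # {0: 0.8, 1: 0.2, 2: 0.0}
print(high_risk)   # {0}

def needs_follow_up(category_of_new_image):
    """A new image landing in a high-risk category warrants a closer look."""
    return category_of_new_image in high_risk
```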

The developer can test the bot with another 1,000 images to verify that it can still catch that many lungs with cancer. But the developer can’t be sure that the bot will still work correctly in the near future. The photo technology might change, so that the images can’t be recognized correctly anymore. The developer will then have to reset the bot and make it relearn from the new dataset, and possibly also change one or more of the image analysis algorithms in use, for the bot to find lung cancer again.

Reinforcement learning

A bot using supervised or unsupervised learning is a heuristic. A heuristic can be viewed as a simplified world view that can predict the outcome of an event with high probability from a minimal set of data.

For example: if a car drives 60 km/h on average and will drive for 3 hours, then the heuristic speed * time = distance leads to the conclusion that the car will drive 180 km. Traffic, weather and everything else are not mentioned, but could have been incorporated in the average driving speed.
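The arithmetic can be written out as a tiny function. The trip segments below are made up, purely to show how traffic can be folded into the average speed.

```python
def estimate_distance_km(average_speed_kmh, hours):
    """The heuristic from the text: speed * time = distance."""
    return average_speed_kmh * hours

# Hypothetical trip: one hour on a clear motorway, two hours in heavy traffic.
segments = [(80, 1), (50, 2)]                     # (speed in km/h, hours)
total_hours = sum(hours for _, hours in segments)
average_speed = sum(speed * hours for speed, hours in segments) / total_hours

print(estimate_distance_km(60, 3))                       # 180
print(estimate_distance_km(average_speed, total_hours))  # 180.0
```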

Supervised and unsupervised learning are heuristics that contain noise, which the developer needs to filter out. The problem is that the developer doesn’t know what type of noise to expect or when to expect it, and therefore can’t prepare an algorithm in advance to deal with it. The developer can try to find as much noise as possible and filter it out. It is the same process as when testers try to find expected and unexpected test scenarios in traditional software development. It is not possible to generate all the testing data, such as how the lung photo technology will change, but it is possible to monitor the input to see when a change happens and whether it affects the bot. If it does, the bot can be reset and set to relearn from the new data.
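One simple way to monitor for such a change is to compare a summary statistic of recent inputs with the same statistic from the learning data. The sketch below assumes average image brightness as that statistic and a three-sigma rule as the alarm. Both are illustrative choices, and the numbers are invented.

```python
from statistics import mean, stdev

def has_drifted(baseline, recent, max_sigmas=3.0):
    """True if the recent average lies more than `max_sigmas` baseline
    standard deviations away from the baseline average."""
    return abs(mean(recent) - mean(baseline)) > max_sigmas * stdev(baseline)

# Hypothetical average brightness per image in the original learning data.
baseline_brightness = [0.50, 0.52, 0.48, 0.51, 0.49]

same_equipment = [0.51, 0.49, 0.50]
new_equipment = [0.70, 0.72, 0.69]    # e.g. a new camera producing brighter images

print(has_drifted(baseline_brightness, same_equipment))   # False
print(has_drifted(baseline_brightness, new_equipment))    # True
```

A positive result only says that the input has changed. Whether the change actually hurts the bot still has to be checked against images with known answers before deciding to reset and relearn.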

Bots based on supervised and unsupervised learning use a heuristic, but require a human to change it. Bots based on reinforcement learning not only use a heuristic, but can also apply changes to it.

A bot based on reinforcement learning contains a QA layer with Key Performance Indicators (KPIs), which measure the bot’s performance. This QA layer is able to apply changes to the bot in the form of A/B testing.

Example:

Imagine a computer screen where one of the pixels is our bot and another pixel is the bot’s target:

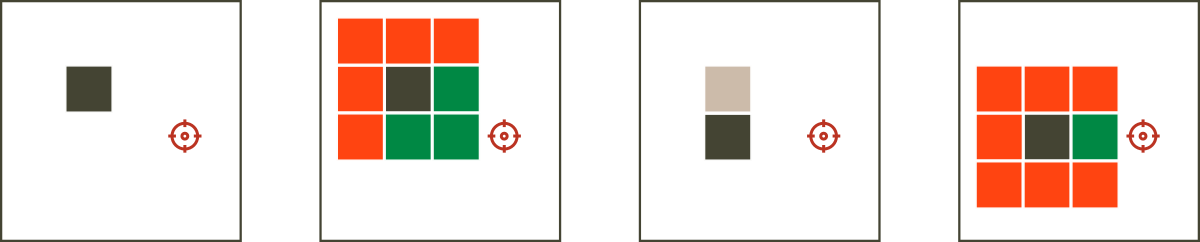

The bot could have 4 cameras (one in each direction) and a leg (so it can move in a direction). The developer would create a KPI on the QA layer that measures the distance between the bot and its target. The bot would try to create a relation between its cameras and its leg by trying out random changes. A change would be approved by the QA layer if the distance between the bot and the target became smaller, and trashed if the distance became larger.

This would be a form of A/B testing, since the performance of the changed bot version (B) would be compared to the original bot version (A), and the better bot would be chosen.
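A minimal sketch of this loop, under simplifying assumptions: the relation between cameras and leg is a plain lookup table (`policy`), the target sits at (0, 0), and the KPI is the summed distance after a single move from four hypothetical start positions. None of these names or numbers come from the actual bot.

```python
import random

MOVES = {"N": (0, -1), "S": (0, 1), "E": (1, 0), "W": (-1, 0)}   # screen y grows downward
# Hypothetical test positions: each start puts the target in view of exactly
# one camera, e.g. from (0, 5) the target at (0, 0) is seen by camera N.
STARTS = {"N": (0, 5), "S": (0, -5), "E": (-5, 0), "W": (5, 0)}

def kpi(policy):
    """QA-layer KPI: summed distance to the target after one move from each
    start. `policy` maps the camera that sees the target to a leg direction."""
    total = 0
    for camera, (x, y) in STARTS.items():
        dx, dy = MOVES[policy[camera]]
        total += abs(x + dx) + abs(y + dy)
    return total

def learn(policy, trials, rng):
    """Try random changes; keep version B only if its KPI beats version A."""
    best = kpi(policy)
    for _ in range(trials):
        candidate = dict(policy)
        camera, move = rng.choice("NSEW"), rng.choice("NSEW")
        candidate[camera] = move                  # the random change
        score = kpi(candidate)
        if score < best:                          # distance got smaller: approve
            policy, best = candidate, score
        # otherwise the change is trashed and version A stays
    return policy, best

clueless = {camera: "N" for camera in "NSEW"}     # always steps north at first
learned, score = learn(clueless, trials=500, rng=random.Random(0))
print(kpi(clueless), score, learned)
```

The bot is never told that camera E should drive the leg east. It ends up with that relation only because every other choice scores worse on the KPI.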

Learning & unlearning

The greatest strength of reinforcement learning is that it can unlearn (forget) and relearn by itself.

For example, if the northern and southern cameras switched places, the bot would move south when expecting to move north, and the other way around. The QA layer would catch this change and try to make the bot forget the old heuristic and reinforce a new one on the new dataset.
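The camera switch can be played through on a toy model. In the sketch below, `policy` (camera to leg direction), `wiring` (which camera is mounted in which direction), the start positions and the one-move KPI are all assumptions made for illustration, not the code behind the actual bot.

```python
import random

MOVES = {"N": (0, -1), "S": (0, 1), "E": (1, 0), "W": (-1, 0)}   # screen y grows downward
# Hypothetical test positions around a target at (0, 0), keyed by the true
# direction in which the target lies as seen from the bot.
STARTS = {"N": (0, 5), "S": (0, -5), "E": (-5, 0), "W": (5, 0)}

def kpi(policy, wiring):
    """Summed distance to the target after one move from each start.
    `wiring` maps a true direction to the camera mounted there;
    `policy` maps a camera to the leg direction the bot has learned."""
    total = 0
    for direction, (x, y) in STARTS.items():
        dx, dy = MOVES[policy[wiring[direction]]]
        total += abs(x + dx) + abs(y + dy)
    return total

def qa_round(policy, wiring, rng):
    """One A/B test. Version A is re-measured every round, so a heuristic
    that stopped working loses to a random change that works better."""
    candidate = dict(policy)
    camera, move = rng.choice("NSEW"), rng.choice("NSEW")
    candidate[camera] = move
    return candidate if kpi(candidate, wiring) < kpi(policy, wiring) else policy

original = {d: d for d in "NSEW"}
swapped = dict(original, N="S", S="N")   # north and south cameras switched places

policy = dict(original)                  # what the bot learned before the switch
kpi_before = kpi(policy, original)       # 16
kpi_broken = kpi(policy, swapped)        # 20: the same heuristic now scores worse

rng = random.Random(0)
for _ in range(500):
    policy = qa_round(policy, swapped, rng)

print(kpi_before, kpi_broken, kpi(policy, swapped), policy)
```

The design choice that makes unlearning possible here is that the QA layer never trusts an old score: version A is measured again on the current world in every round, so the moment the cameras are switched the old heuristic loses its advantage.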

The following video shows an actual bot (black dot) following a moving target (red dot). After the bot has learned to move, two cameras are switched to confuse the bot, and it is up to the bot to unlearn and relearn. I have coded the bot from scratch to prove that a bot based on reinforcement learning can do that in real time:

Evolutionary algorithm

Multiple bots could even share the same QA layer and try not only A/B testing but ABCDE…Z testing at once, to speed up the learning. For example, a bot creates eight children, each taking a different decision. All the child bots share their knowledge about their decisions, and only one random child with a good decision survives. That child creates another eight children to take eight different decisions, one of them survives and gets eight new children, etc.:

This would improve the learning speed of the bot by up to a factor of eight for each step, since eight decisions are tried at once. At the same time, the bot would be able to select one of the best solutions each time. This is how evolutionary algorithms work. OpenAI also used parallel, sped-up simulations (not real time) to train a bot to beat the best Dota 2 players.

Read more at https://blog.openai.com/dota-2/ or watch the YouTube video: https://www.youtube.com/watch?v=jAu1ZsTCA64
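The eight-children scheme from this section can be sketched on a grid. As an assumption for illustration, the eight decisions are the eight neighbouring cells, and a "good" decision is one that brings the child closer to the target. Real evolutionary algorithms usually work with larger populations and mutate parameters rather than positions, and this sketch has nothing to do with how OpenAI trained its bot.

```python
import random

# Eight different decisions: step to one of the eight neighbouring cells.
DECISIONS = [(dx, dy) for dx in (-1, 0, 1) for dy in (-1, 0, 1) if (dx, dy) != (0, 0)]

def squared_distance(a, b):
    return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2

def evolve(parent, target, rng):
    """Each generation: spawn eight children, keep one random good child."""
    generations = 0
    while parent != target:
        children = [(parent[0] + dx, parent[1] + dy) for dx, dy in DECISIONS]
        # A good decision is one that ends up closer to the target. At least
        # one always exists, because stepping toward the target on each axis
        # reduces the distance.
        good = [child for child in children
                if squared_distance(child, target) < squared_distance(parent, target)]
        parent = rng.choice(good)          # only one random good child survives
        generations += 1
    return parent, generations

start, target = (-6, 4), (0, 0)            # hypothetical positions
final, generations = evolve(start, target, random.Random(0))
print(final, generations)
```

Because every surviving child is strictly closer than its parent, the loop always ends at the target. How many generations it takes depends on which good child happens to be picked.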

Continued in Part 3 of 3 – In bots we trust (coming soon)

Testing in the digital age is a new Sogeti book that brings an updated view on test engineering, covering testing topics on robotics, AI, blockchain and much more. A lot of people provided text, snippets and ideas for the chapters, and this article (part 1 of 3) is my contribution to the book, covering the chapter on robotesting (with a few changes to optimize for web).

The book Testing in the digital age can be found at: https://www.ict-books.com/topics/digitaltesting-en-info
