GENERATIVE AI FOR QE&T

July 26, 2023

Overview:

AI and ML are already household terms. Over the last decade, everyday familiarity with these terms has grown steadily. Most of us talk about infusing AI into our day-to-day activities. In fact, the infusion has been so seamless that we haven’t even realized we are already a few years into our AI journeys. For instance, YouTube on our smartphones seems to know what we might be interested in watching, and online shopping apps anticipate what we might need at a certain time of the year and start making suggestions. Rewind 10 years and we could hardly have imagined many of these SMART additions to our lives. Such has been the impact of AI that these days we ask Alexa to launch a movie from an OTT platform on the living-room TV; even the once-revolutionary remote control seems obsolete at times. While these are examples from our leisure time, we can’t ignore the impact of AI at work.

Generative AI (a.k.a. Gen AI) has been with us for a while; its history dates back to the 1950s and 1960s. In recent times, however, Gen AI has come to be seen as a disruptive technology that could change the way we live our lives. Thanks to ChatGPT, this revolution is now at everyone’s fingertips: it can draft your profile for you and answer questions on almost any topic. So, it’s no surprise that Gen AI has disrupted the way we work in the IT industry. People have been talking about automating development activities, automated code generation etc. for a while now, and Microsoft Power Platform, Mendix etc. are some of the established low-code / no-code platforms in the market. With the advent of ChatGPT, developers can easily get automatically generated code snippets, and this has already been observed to be quite effective. Given those capabilities, applying Gen AI to software testing becomes an interesting proposition.

Generative AI Models:

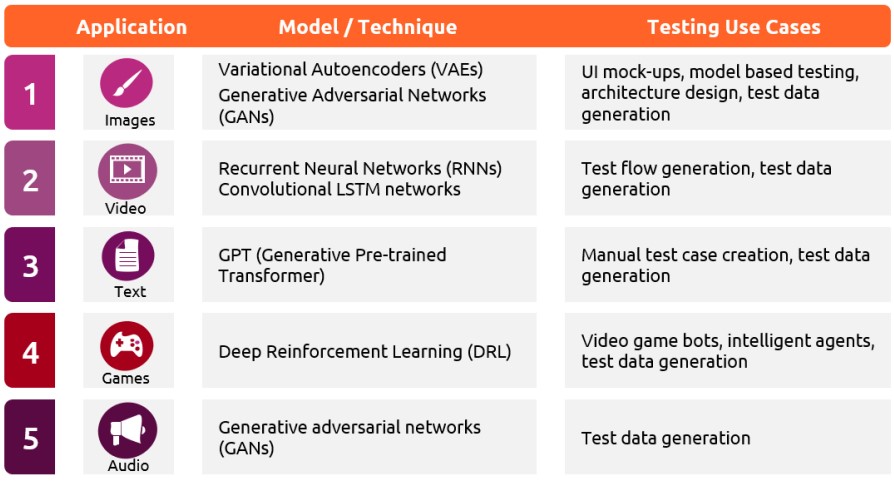

Typically, text-based Gen AI relies on Large Language Models (LLMs), which are trained on vast amounts of data and generate output in response to the user’s input. Many of us have already tried ChatGPT, but the application of Gen AI is not limited to text-based interactions. Gen AI models can generate a wide variety of output, such as text, images, audio, video and code.

Generative AI in Software Testing:

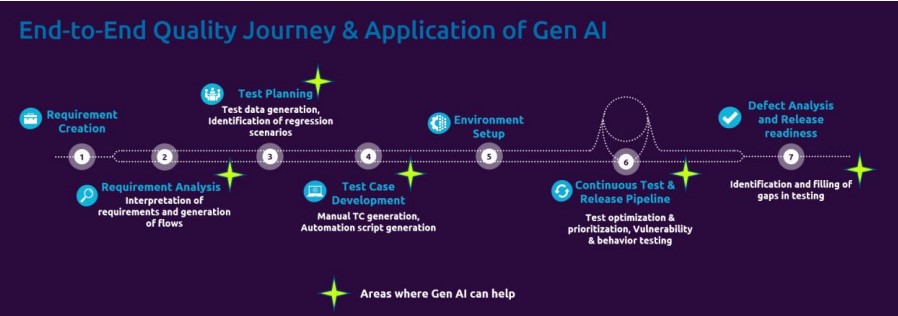

Having analysed how Gen AI works, its applicability can be seen at multiple points during the software testing journey:

- Test case generation: Writing test cases manually is a tedious, time-consuming task that every test engineer goes through. Requirements are open to individual interpretation, which directly affects the quality of the resulting test cases. Gen AI can help automate this process. It can analyse requirements, user stories, business flows etc. written in multiple formats and identify key focus areas. From those focus areas, Gen AI can build models and generate test cases that cover the possible scenarios. Non-functional requirements such as localization, usability, reliability and scalability can also be covered.

- Test data generation: Vulnerability and behaviour testing is only effective when it is supported by diverse, realistic data. Gen AI models can generate data covering a wide variety of scenarios by learning from previously used data and test executions, and they can do so at scale. Data security is critical, and Gen AI can also generate synthetic data, i.e., data that closely resembles production data, making it possible to test real-world scenarios while keeping the production data secure. In this way, Gen AI can offset the shortcomings of the manual process, which is often time-consuming and error-prone.

- Test optimization and prioritization: By generating models from requirements, user stories etc., Gen AI can eliminate repetitive paths and identify the most unique paths, or those with the maximum possible coverage. Using a feedback loop and past executions, Gen AI can then rank paths by their potential impact or vulnerability. Combined with potential severity, this analysis lets Gen AI prioritize the test cases with the highest potential impact. In this way, Gen AI can assist with test optimization and prioritization.

- Regression test identification: In an agile world, software development is bound by timeboxed releases. With every release, the scope grows cumulatively, which directly increases the number of test cases in the regression suite. With proper change management discipline, developers can generate enough data for Gen AI to analyse the changes made to the system. Gen AI can then identify the areas made vulnerable by those changes and suggest which test cases should be included in the regression suite. Where test cases do not exist, it can also generate them to close gaps in regression coverage.

- Vulnerability and behaviour testing: While manual testing evaluates application behaviour in various expected and unexpected ways, there is a limit to what the human brain can think of. Gen AI can be a powerful option for generating a wide variety of expected and unexpected inputs. These inputs can be any values, file formats, data formats, network protocols etc., and they might uncover vulnerabilities that would otherwise be missed completely. By learning from previous test executions, system logs, test data etc., Gen AI models can identify areas that may have been inadequately tested or missed altogether. Thus, Gen AI has the potential to uncover unexpected behaviours and vulnerabilities in a software system.

- Automation script generation: At a minimum, to generate an automation script, the automation engineer needs to know (i) the use case to test and (ii) the application under test. The output of the scenarios above can serve as input for automation script generation. For instance, suppose a use case, say UC-1234, tells the test engineer to navigate to https://www.amazon.com, enter ‘iPad’ as the search text and then click the ‘Search’ button. The input to Gen AI can be as simple as the steps of UC-1234 followed by: Create a Selenium-based automation script for UC-1234. For basic scenarios like these, Gen AI does a very good job. For complex scenarios, it is difficult to get exactly the desired output, but Gen AI can generate the basic building blocks or suggest an approach for designing the complex automation scripts. Gen AI can also assist with basic debugging.

- Defect analysis: After every test cycle, Gen AI can be used to analyse the defects and create test cases for defects that don’t yet have any associated test cases. Similarly, existing test cases can be amended to cover additional scenarios / steps. This is a very important use case since it uncovers gaps in testing and makes testing more robust.
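To make the test data generation idea concrete, here is a minimal Python sketch. It deliberately swaps in a simple rule-based generator rather than a Gen AI model, and the `Customer` schema, field names and value pools are hypothetical. It shows the two properties the bullet asks for: records that look realistic in shape and format, and no values copied from production.

```python
import random
from dataclasses import dataclass


# HYPOTHETICAL schema for a customer record; the fields are illustrative only.
@dataclass
class Customer:
    customer_id: str
    name: str
    email: str
    country: str
    age: int


FIRST_NAMES = ["Asha", "Ben", "Chen", "Diego", "Eva", "Farah"]
LAST_NAMES = ["Kumar", "Smith", "Li", "Garcia", "Novak", "Haddad"]
COUNTRIES = ["IN", "US", "CN", "ES", "CZ", "AE"]


def synthetic_customers(n: int, seed: int = 42) -> list[Customer]:
    """Generate n realistic-looking but entirely fictitious customer records.

    A seeded random generator keeps the data reproducible across test runs,
    and no value is taken from production data.
    """
    rng = random.Random(seed)
    records = []
    for i in range(n):
        first = rng.choice(FIRST_NAMES)
        last = rng.choice(LAST_NAMES)
        records.append(Customer(
            customer_id=f"CUST-{i:06d}",
            name=f"{first} {last}",
            # example.com is reserved for documentation, so no real inbox is ever used
            email=f"{first}.{last}{i}@example.com".lower(),
            country=rng.choice(COUNTRIES),
            age=rng.randint(18, 90),
        ))
    return records
```

Seeding the generator makes a failing test reproducible; in a Gen AI setup, the fixed value pools could be replaced by values the model derives from earlier test data.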
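The optimization and prioritization step can likewise be sketched without a model. The Python below uses a plain risk-based heuristic (failure rate from past runs multiplied by a team-assigned severity) as a stand-in for what a Gen AI model would learn; the `Case` fields, the 1 to 5 severity scale and the neutral 0.5 rate for never-run tests are all assumptions. Tests that exercise an identical path are treated as duplicates, and only the highest-risk one is kept.

```python
from dataclasses import dataclass


@dataclass
class Case:
    test_id: str
    path: tuple      # sequence of steps/screens the test exercises
    runs: int        # past executions
    failures: int    # past failures
    severity: int    # ASSUMPTION: 1 (low) to 5 (critical), set by the team


def risk_score(tc: Case) -> float:
    # ASSUMPTION: never-run tests get a neutral 0.5 failure rate so they are not buried.
    failure_rate = tc.failures / tc.runs if tc.runs else 0.5
    return failure_rate * tc.severity


def optimize(tests: list) -> list:
    """Order tests by descending risk and drop tests that repeat an earlier path."""
    # Ties are broken by test_id so the order is reproducible.
    ranked = sorted(tests, key=lambda tc: (-risk_score(tc), tc.test_id))
    seen_paths = set()
    selected = []
    for tc in ranked:
        if tc.path not in seen_paths:  # keep only the highest-risk test per path
            seen_paths.add(tc.path)
            selected.append(tc)
    return selected
```

For instance, given two tests on the same login-then-search path that failed 1 and 4 times in 10 runs, only the latter survives, and it is ranked behind a checkout test that failed 5 of 10 times at severity 5.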
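For regression test identification, a common non-AI baseline is change-based test selection: map each test to the source files it exercises, then pick every test that touches a changed file. The sketch below assumes such a coverage map already exists (for example, collected by a coverage tool in earlier runs) and that the changed files come from something like `git diff --name-only`; the function name and data shapes are hypothetical. Changed files that no test covers are returned separately, since those are where new test cases would need to be generated.

```python
def select_regression_tests(changed_files, coverage_map):
    """Pick tests affected by a change and report changed files no test covers.

    changed_files: paths changed since the last release (e.g. from git diff --name-only).
    coverage_map:  test ID -> iterable of source files the test exercises
                   (HYPOTHETICAL shape; typically built from coverage data).
    Returns (sorted IDs of tests to run, sorted changed files with no covering test).
    """
    changed = set(changed_files)
    selected = sorted(
        test_id for test_id, files in coverage_map.items() if changed.intersection(files)
    )
    # Union of everything any test exercises; empty map gives an empty set.
    covered = set().union(*coverage_map.values())
    uncovered = sorted(changed - covered)
    return selected, uncovered
```

With a coverage map in which TC-1 and TC-3 both touch `pricing.py`, a change to `pricing.py` and `search.py` selects TC-1 and TC-3 and flags `search.py` as having no covering test.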
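The vulnerability and behaviour testing bullet is close in spirit to fuzzing. The sketch below is a basic mutation-based fuzzer, not a Gen AI model: it feeds a fixed list of boundary and malformed values, plus randomly mutated copies of valid seed inputs, to a target function and records every input that raises an exception. The edge-case list and the mutation operators are illustrative assumptions; a Gen AI model could propose far richer inputs, but the harness that runs them and collects failures would look much the same.

```python
import random
import string

# ASSUMPTION: a small, hand-picked list of boundary and malformed values.
EDGE_CASES = ["", " ", "0", "-1", "9" * 50, "12abc", "NaN", "\x00", "' OR '1'='1", "<script>"]


def mutate(seed: str, rng: random.Random) -> str:
    """Apply one random character-level mutation: delete, duplicate or replace."""
    if not seed:
        return rng.choice(string.printable)
    i = rng.randrange(len(seed))
    op = rng.choice(("delete", "duplicate", "replace"))
    if op == "delete":
        return seed[:i] + seed[i + 1:]
    if op == "duplicate":
        return seed[:i] + seed[i] + seed[i:]
    return seed[:i] + rng.choice(string.printable) + seed[i + 1:]


def fuzz(target, seeds, iterations=200, seed=0):
    """Run target on edge cases plus mutated seeds; map each failing input to its exception name."""
    rng = random.Random(seed)
    inputs = list(EDGE_CASES) + [mutate(rng.choice(seeds), rng) for _ in range(iterations)]
    failures = {}
    for value in inputs:
        try:
            target(value)
        except Exception as exc:  # any unhandled exception is a finding to triage
            failures.setdefault(value, type(exc).__name__)
    return failures
```

For example, against a hypothetical `parse_age` function that calls `int()` on its input and rejects values outside 0 to 150, inputs such as `""` and `"-1"` show up in the returned dictionary as `ValueError`.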
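For UC-1234, the script a Gen AI tool returns would typically resemble the Python Selenium sketch below. The element IDs `twotabsearchtextbox` and `nav-search-submit-button` are assumptions about Amazon’s markup and can change at any time, so a human engineer should verify them before use. The steps are kept in a function that accepts any driver object, which also makes the generated script easy to review and to test without a browser.

```python
# Locators are (strategy, value) pairs. "id" is the string value of
# selenium.webdriver.common.by.By.ID. The element IDs are ASSUMPTIONS about
# Amazon's markup and must be re-checked before use.
SEARCH_BOX = ("id", "twotabsearchtextbox")
SEARCH_BUTTON = ("id", "nav-search-submit-button")


def run_uc_1234(driver, base_url="https://www.amazon.com", term="iPad"):
    """UC-1234: open the site, enter the search text and click 'Search'."""
    driver.get(base_url)
    search_box = driver.find_element(*SEARCH_BOX)
    search_box.clear()
    search_box.send_keys(term)
    driver.find_element(*SEARCH_BUTTON).click()


def main():
    """Run UC-1234 in a real Chrome session (needs the selenium package and Chrome)."""
    from selenium import webdriver

    driver = webdriver.Chrome()
    driver.implicitly_wait(10)  # wait up to 10 s for elements to appear
    try:
        run_uc_1234(driver)
    finally:
        driver.quit()
```

Calling `main()` runs the use case against the live site. A complete test would also assert something about the results page, which UC-1234 as described does not specify.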
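The defect analysis step starts with a simple gap check: which defects from the last cycle are not referenced by any test case? A minimal sketch, assuming defects and test cases are linked by ID as in many test management tools (the data shapes here are hypothetical):

```python
def defects_without_tests(defect_ids, test_links):
    """Return defect IDs that no test case references, in sorted order.

    defect_ids: defects raised in the last test cycle.
    test_links: test case ID -> set of defect IDs the test case covers
                (HYPOTHETICAL shape, e.g. exported from a test management tool).
    """
    # Every defect referenced by at least one test; empty links give an empty set.
    covered = set().union(*test_links.values())
    return sorted(set(defect_ids) - covered)
```

The returned defects are the ones for which Gen AI (or an engineer) would draft new test cases; the test cases linked to the remaining defects are the candidates for amendment.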

Risks:

All the above potential applications, and many more, sound very promising, but Gen AI is still far from completely accurate. It is very important to understand that the power of Gen AI comes with caveats; here are a few of them:

- Models are biased: This is a well-known problem that, despite years of attention, has not been addressed satisfactorily. Models can also profile the person they are interacting with and may generate inaccurate outputs that depend on who is asking.

- They exploit loopholes: Once an AI model has learned patterns from historic data, it may identify loopholes in the systems it learns from and exploit them. So, the output it generates might conform to the rules and still be invalid.

- The risk of the unknown: While the way Gen AI does its job is very impressive, it is very hard to explain how a model arrives at a particular output for a given input. This opacity can be unnerving, especially if the models have access to confidential information, where it poses a huge data / information security risk.

- Models can’t unlearn: If a model is required to unlearn a certain part of its training data, for reasons such as information security, the options are:

a. Delete the specific data points from the training data and retrain the model. This is a very expensive approach.

b. Use the SISA (Sharded, Isolated, Sliced, and Aggregated) training approach proposed by researchers at the University of Toronto, in which the data is processed in pieces at training time so that, if the need arises, data points can be deleted from only the affected pieces and just the corresponding portion of the model is retrained.
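The SISA idea can be sketched in a few dozen lines of Python. This is a simplified illustration, not the authors’ implementation: the training data is dealt round-robin into disjoint shards, each shard trains its own isolated model (a tiny nearest-centroid classifier is assumed here purely for brevity), predictions are aggregated by majority vote, and the slicing/checkpointing within each shard is omitted. Unlearning a point deletes it from its shard and retrains only that shard’s model, leaving the others untouched.

```python
from collections import Counter


def train_centroids(points):
    """ASSUMED constituent model: the mean feature vector of each label."""
    sums, counts = {}, Counter()
    for features, label in points:
        acc = sums.setdefault(label, [0.0] * len(features))
        for j, x in enumerate(features):
            acc[j] += x
        counts[label] += 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict_one(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    return min(
        centroids,
        key=lambda label: sum((c - x) ** 2 for c, x in zip(centroids[label], features)),
    )


class SISAEnsemble:
    """SISA-style sketch: disjoint shards, one isolated model per shard, majority vote.

    Slicing (checkpointing within a shard) is omitted for brevity.
    """

    def __init__(self, data, num_shards):
        # data: point ID -> (features, label); points are dealt round-robin into shards.
        self.shards = [{} for _ in range(num_shards)]
        for i, point_id in enumerate(sorted(data)):
            self.shards[i % num_shards][point_id] = data[point_id]
        self.models = [self._train(shard) for shard in self.shards]
        self.retrain_count = 0

    @staticmethod
    def _train(shard):
        return train_centroids(shard.values()) if shard else {}

    def predict(self, features):
        # Aggregate: each non-empty shard model casts one vote.
        votes = Counter(predict_one(m, features) for m in self.models if m)
        return votes.most_common(1)[0][0]

    def unlearn(self, point_id):
        """Delete one point and retrain only the shard that held it; return that shard's index."""
        for k, shard in enumerate(self.shards):
            if point_id in shard:
                del shard[point_id]
                self.models[k] = self._train(shard)
                self.retrain_count += 1
                return k
        raise KeyError(point_id)
```

With `num_shards` shards, a deletion retrains roughly 1/`num_shards` of the data instead of all of it, which is where the savings over option (a) come from; the trade-off is that each constituent model sees less data.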

Practising Ethical AI, which requires a system to be inclusive, unbiased, explainable, built for the right purpose, responsible in its data usage etc., can be a big challenge given all of the above caveats, and hence introducing Gen AI into business-critical operations poses a significant risk. Worse, nobody can even predict the likelihood or the extent of this risk. For example, in the medical field, Gen AI might reach a diagnosis based partly on the patient’s background, medical history, similarity of symptoms with other patients etc., producing potentially inaccurate, biased reports. Unless supervised by an expert, this might lead to disastrous outcomes.

There are many more such risks, and new ones are being uncovered every day. Despite Gen AI’s huge potential in software testing, it is very important that human test engineers and domain experts supervise its work to mitigate those risks as far as possible.

Conclusion:

In a nutshell, human expertise is not yet replaceable; in fact, it becomes even more important for making the right interpretations and taking the right decisions. As Stephen Hawking warned, “Success in creating AI would be the biggest event in human history. Unfortunately, it might also be the last, unless we learn how to avoid the risks.”

In my view, human intelligence is here to stay. There is still a learning curve ahead for all of us in understanding how to leverage Gen AI for its virtues rather than its still mostly unknown risks.
