DATACENTER BITES THE DUST!

November 11, 2015

We usually approach IT matters as a source of disruption in our lives, but we rarely mention the effect this revolution has had on the IT world itself. Infrastructure as we used to know it is about to pass away.

It has become such a cliché to talk about disruption nowadays. Over the past decade, and surely for years to come, we have heard about the irreversible impact of IT on our everyday life: internet, web 2.0, wearables, 3D printing, bitcoin, IoT, blockchain… and many more. We talk about the way we now buy, pay, book, rent, sell, share… and I could go on and on.


How has the Datacenter evolved since it first appeared, and how does it impact us, IT professionals, today and for the next decades?

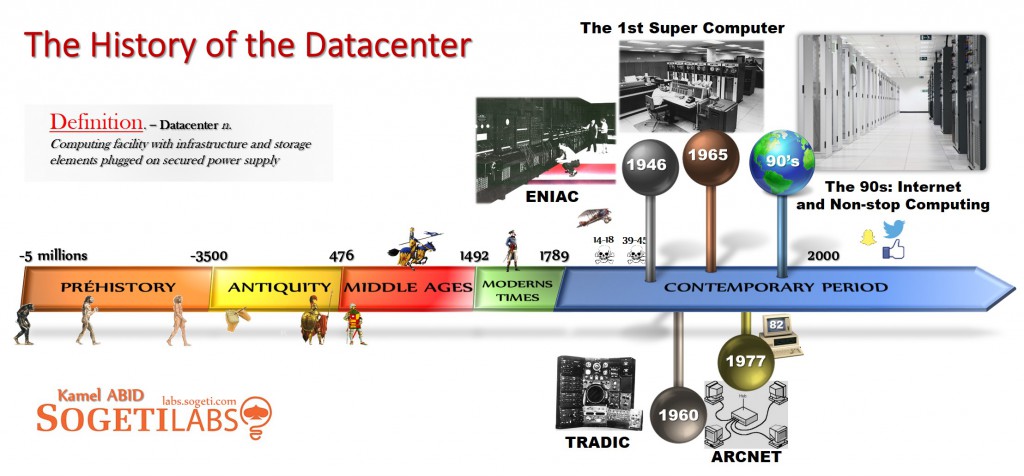

Before answering the question, let’s first agree on a short definition of the word Datacenter. It is a computing facility with infrastructure and storage elements connected to a secured power supply. By that definition, we can consider ENIAC the grandfather of the datacenters we know today. ENIAC was built for the US Army to compute artillery firing tables. No other computer of its time had comparable storage and calculation capacity. The entire installation required more than 160 square meters. It was a great hardware innovation, and a great industrial innovation as well.

After ENIAC came TRADIC. It was built in 1954, also in the US, by Bell Labs, and was one of the first fully transistorized computers. Until then, mainframes were mainly built for government and military purposes. Replacing vacuum tubes with transistors paved the way for commercial systems, which reached real success in the 60’s: from this point, IT was finally open to companies as well.

Then came the CDC 6600. It is remembered as the first supercomputer. This next-generation system claimed a clock cycle of 100 nanoseconds, was based on a 60-bit architecture and was among the first to include a CRT console. Yet another hardware innovation. To own a CDC 6600, you still had to pay no less than 8 million dollars. Only big players could afford such an expense.

Finally, ARCNET. We can consider ARCNET an ancestor of the Internet. At the time, the revolutionary idea was to connect computers to a shared data drive via coax cables. From that point on, telcos joined the innovation race.

A couple of years later came the PC. Mainframes were extremely expensive and required enormous resources in space, operation and cooling. So, to bring IT to more and more companies (not only the majors), IBM released the first widely recognized PC in 1981. Companies worldwide began deploying computers throughout their organizations and saw a significant benefit in them. The IT penetration rate took off for good.

What we have seen so far, regarding datacenter history and evolution, is that the driver was “hardware innovation”.

The schism in the IT infrastructure paradigm

We IT guys used to reign within companies, coached by hardware brands and their innovations. We used to explain how important it was to have a full 100 Mb/s network or external SCSI storage… We used to dictate what was compatible with what.

But in the 90s the IT penetration rate also increased in the consumer world, so we were no longer the only ones who thought we understood IT. End users were becoming more and more powerful.

The Internet, which reached an inflection point toward mass-market adoption in the mid-1990s, brought a feeding frenzy of datacenter adoption in the late 90s. As companies began demanding a permanent presence on the Internet, the datacenter-as-a-service model became common for most of them.

But the most important change behind this schism is that we no longer care about infrastructure. Let me explain.

While hardware innovations were the fuel of our changes during the first half-century of the Datacenter’s life, today we have reached enough maturity to ask “Why are we adopting this IT innovation?”. I mean, IT guys are no longer dictating which tools must be used. Nowadays, the newly empowered users are mature enough to define their IT needs, and it is their turn to dictate their wishes.

“I want to be able to work anywhere, at any time, and don’t try to explain to me that it’s not compatible, because today everything is compatible with everything. From anywhere, I can book a house in Honolulu on Airbnb from my Android tablet, my office computer or even my TV, and to authenticate I just use my Facebook account. And you try to explain to me why our CRM is not compatible with our email system, and that it is normal to juggle dozens of passwords! Come on! You IT guys need to escape from prehistory.”

Honestly,

… are you able to give me the brand of hardware which hosts your most critical professional data?

And do you know whether your data is stored on SCSI, IDE, SATA II, SATA III, virtual or some other technology? We don’t have a clue (not even us IT guys), and we just don’t care.

The Cloud is here, and with it the pooling of IT resources, in both its private and public forms.

On top of this, SDN and container features are coming with Windows Server 2016. The first will give everyone the capability to move IT services from on-premises to any cloud provider with no outage, almost by drag and drop. The second will free developers to code regardless of the back-office frameworks and the front-office devices or browsers that will be used.

I have worked in IT in the business world for more than 20 years, and I have seen the definition of IT strategy shift. We used to follow manufacturers’ innovations to bring additional capacity and capabilities to the business. Progressively, we escaped from hardware considerations, then from brand, network and location considerations. With the rise of web-based apps, we are now even escaping from end-user OS and devices. On top of that, Docker and containers are coming, and we won’t even care about the underlying databases, storage and deployment tools anymore.

IT Infrastructure, as we used to know it, is dead. Does it mean that our business is too? Hell no!

And that will be the topic of my next article. Stay tuned to Sogeti Labs.

