BIG DATA AND TRENDS ANALYSIS: BE AWARE OF THE HUMAN FACTOR!

July 22, 2013

At the beginning of 2009, Google researchers published in Nature [1] the results of a new service called Google Flu Trends (http://www.google.org/flutrends). The idea is simple and elegant: someone who has flu symptoms will search the web for words like fever, headache, flu, doctor, etc. By analysing the frequency of a chosen set of words, we can follow how the flu spreads through a country or around the world.
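To make the idea concrete, here is a minimal sketch in Python. It is not Google's actual model: it assumes a plain straight-line fit between the share of flu-related searches and the share of doctor visits for flu-like illness, and every number in it is invented for illustration. The names `fit_line` and `estimate_flu` are hypothetical helpers.

```python
# Toy illustration only: all figures are invented, not Google or CDC data.

def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

# Hypothetical weekly share of searches containing flu-related words (in %).
query_share = [0.10, 0.15, 0.22, 0.30, 0.41, 0.35, 0.20]
# Hypothetical share of doctor visits for flu-like illness, same weeks (in %).
flu_visits = [1.1, 1.4, 2.3, 2.9, 4.0, 3.6, 2.1]

intercept, slope = fit_line(query_share, flu_visits)

def estimate_flu(share):
    """Estimate flu activity from the search share alone."""
    return intercept + slope * share

# A week where search behaviour matches the historical pattern.
normal_week = estimate_flu(0.41)
# Same real flu level, but twice as many searches for reasons unrelated to illness.
hyped_week = estimate_flu(0.82)

print(f"normal week estimate: {normal_week:.1f}%")
print(f"hyped week estimate:  {hyped_week:.1f}%")
```

The fit only knows the search share. If people search more for any reason other than being ill, the estimate rises with them, which is the weakness the rest of this post is about.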

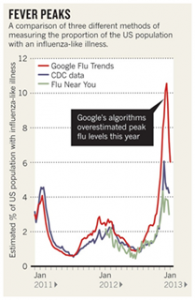

Until 2012, the mathematical model worked very well. Each year, Google improved it together with people from the CDC (Centers for Disease Control and Prevention). But last winter, everything went wrong, as you can see in the diagram from Nature and Google Flu Trends (see article [2]): the algorithm overestimated the epidemic by a large factor.

According to an article published in Nature [2] at the beginning of this year, the explanation is… Google's own marketing campaign for the service, which advertised Google's ability to improve the health of all citizens through Google Flu! Thanks to this campaign, many people went to Google and searched for flu-related keywords, which derailed the entire system.

A few weeks ago, Nature's Scientific Reports published a new article [3] about financial market trends that can be anticipated from the frequency of searches made by web users. Is Google the new martingale, a sure-fire system for predicting the future of financial markets? Not really.

As with Google Flu, people's ability to take into account the existence of mathematical models and their rules (even very complex ones), and thereby cause these models to fail, is a major problem in finance. Three economists showed in 2010 (see [4]) how banks subverted the risk models during the subprime crisis. At the beginning, each bank decided independently whether or not to grant a loan. By observing these data, it was possible to measure the risk of a loan based on a few key criteria. But then, instead of keeping the same behaviour, banks started to lend solely on the basis of the criteria used by the model. In the end, the use of statistical models led to a systematic underestimation of credit risk, because the risk assessment itself relied on the same limited set of criteria.
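The mechanism can be illustrated with a small simulation. This is only one possible reading of it, not the method of the paper [4]: it assumes that each applicant has a "hard" attribute the model records and a "soft" attribute that only a bank examining the file can see, and all probabilities are invented.

```python
import random

rng = random.Random(42)  # fixed seed so the sketch is reproducible

def make_applicant():
    # "hard": meets the key criteria recorded by the model (assumption).
    # "soft": favourable impression from examining the file; never recorded.
    return {"hard": rng.random() < 0.6, "soft": rng.random() < 0.5}

def defaults(applicant):
    # Invented default probabilities, for illustration only.
    if applicant["hard"] and applicant["soft"]:
        p = 0.02
    elif applicant["hard"] or applicant["soft"]:
        p = 0.15
    else:
        p = 0.40
    return rng.random() < p

def default_rate(loans):
    return sum(defaults(a) for a in loans) / len(loans)

applicants = [make_applicant() for _ in range(50_000)]

# Phase 1: banks use their own judgement and lend only when both the
# hard criteria and the soft impression are favourable.
phase1_loans = [a for a in applicants if a["hard"] and a["soft"]]
# A model built on phase 1 data sees this default rate for loans that
# meet the hard criteria, and takes it as their risk.
predicted_rate = default_rate(phase1_loans)

# Phase 2: banks lend solely on the hard criteria used by the model.
phase2_loans = [a for a in applicants if a["hard"]]
actual_rate = default_rate(phase2_loans)

print(f"risk predicted by the model: {predicted_rate:.1%}")
print(f"risk actually observed:      {actual_rate:.1%}")
```

The model was calibrated on loans that had passed a filter it could not see. Once banks drop that filter and follow the model alone, the observed default rate ends up well above the predicted one.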

Conclusion: do not rely on a single model to measure the risk taken by financial players, because they will eventually adapt to it. Generally speaking, no model alone can predict something that depends on human behaviour. In other words, machines must learn that humans can learn and adapt to get around the rules (and quite quickly!).

References:

[1] http://www.nature.com/nature/journal/v457/n7232/pdf/nature07634.pdf

[2] http://www.nature.com/polopoly_fs/1.12413!/menu/main/topColumns/topLeftColumn/pdf/494155a.pdf

[3] http://www.nature.com/srep/2013/130425/srep01684/pdf/srep01684.pdf

[4] Rajan, Uday, Seru, Amit and Vig, Vikrant, The Failure of Models that Predict Failure: Distance, Incentives and Defaults (August 1, 2010). Chicago GSB Research Paper No. 08-19 (http://econ.as.nyu.edu/docs/IO/17025/Seru_20101117.pdf)
