Why are some organizations embracing modern ways of approaching data, test automation, tools, planning and quality assurance, while others are not? What are the underlying mechanisms that are holding us back? I am confident that the answer lies within us as individuals and in our collective mental models as an organization, and that the Cynefin framework can provide a piece of this puzzle.

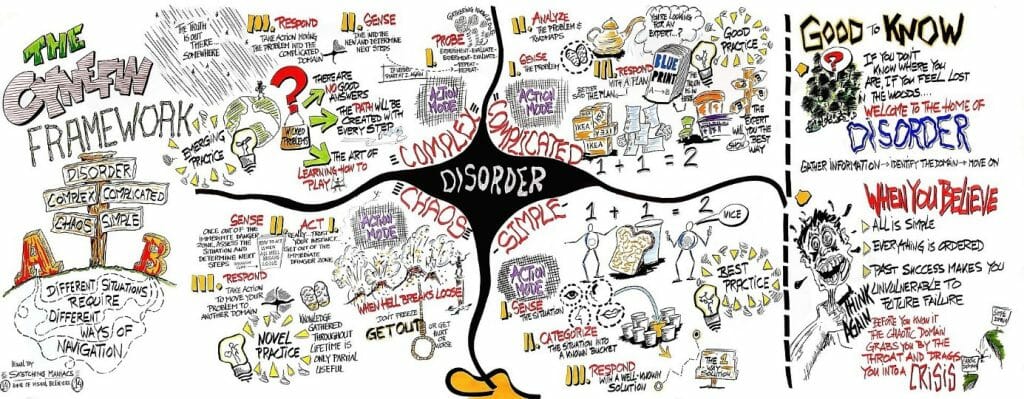

The Cynefin model divides problems into four major domains: Simple, Complicated, Complex and Chaos. Simple problems are like obvious math problems where we know there can be only one correct answer, and that answer is clearly stated in the back of the book, or in a requirement or design specification. The Complicated domain covers problems like building a skyscraper: an architect, an expert, can document the instructions in a blueprint, but building it still takes a lot of planning and coordination. A complicated problem is therefore deterministic, and something we as humans tend to address and solve through what I would refer to as a mechanical approach.

A complex problem, on the other hand, is one where the solution is not deterministic: we don't know what a successful outcome might look like in the end. In a complex system everything is entangled with everything else, so you get no repetition and high levels of uncertainty, but you can observe patterns and respond to them. Therefore, plans and blueprints are not as valuable for a complex problem. We must probe reality continuously: monitor and analyze the data, draw conclusions, act upon them and study the consequences. This is how we relate to organic problems, and how we as humans approach science in its various forms, or healthcare, for example.

The fourth category, Chaos, is just what it sounds like: there are no visible constraints, all our preconditions get turned upside down, and we endure a highly disruptive environment that scares us and leads to panic, as if a natural disaster had struck. Even though this is exactly how it might feel in large development projects, we won't go into that topic in this article.

The Cynefin framework has made me reflect on how one seemingly small and simple change in our development landscape can raise not only obvious questions (did we implement it as we intended?), but also complicated questions (how did this change affect other parts of our system landscape?) and complex questions (did we reach the value we intended for our business, customers and users?).

The problem I've stumbled into with the Cynefin framework is that the words ‘complicated’ and ‘complex’ are easy to confuse. Therefore, I often refer to them as a ‘mechanical’ and an ‘organic’ approach to problem solving instead, which gives a clearer understanding of what we are trying to achieve and fewer misconceptions.

The illustration below of the Cynefin framework is by Edwin Stoop; more info on the difference between complicated and complex can be found in this article.

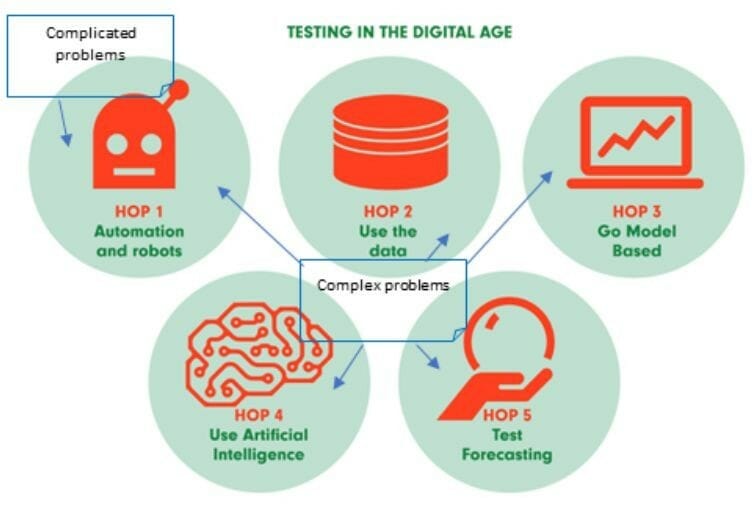

The book Testing in the digital age – it’s all about AI presents a five-step maturity model that the industry is expected to move through in five defined hops:

Hop 1: Automation and Robots

Hop 2: Use the Data

Hop 3: Go model based

Hop 4: Use Artificial Intelligence

Hop 5: Test Forecasting

The problem I’ve experienced is that there is little or no incentive to go any further than the first hop, automation and robots, if we only see software development as something simple, or perhaps in some cases complicated, in terms of the Cynefin model. To look deeper into what automation, data and predictive algorithms can bring to the table, we must embrace that we operate in a complex setting. Otherwise, we will get stuck thinking that if we just determine the optimal plan and process, we can execute it (with the assistance of automation) and reach our goals.

Because – why should we waste time and resources on developing advanced data-assisted algorithms and predictive analysis if our solutions could be built just by having a solid blueprint, processes, and plans?

This means that, in the field of testing and automation, we will forever be stuck with a limited view of our craft as something that shows how well we can stick to our pre-defined plan. In that view, just asking the right people (the experts) how to solve the problem is enough to derive a plan, a recipe for success.

However, things look different when we are dealing with a complex system. Consider the definition of such a system according to Professor George Rzevski:

A complex system is characterized by a set of seven features that connect interacting components, often called Agents (or in our case often called sub-systems, components or microservices):

INTERACTION – A complex system consists of a large number of diverse components (Agents) engaged in rich interaction.

AUTONOMY – Agents are largely autonomous but subject to certain laws, rules or norms; there is no central control, but agent behavior is not random.

EMERGENCE – Global behavior of a complex system “emerges” from the interaction of agents and is therefore unpredictable.

FAR FROM EQUILIBRIUM – Complex systems are far from equilibrium because frequent occurrences of disruptive events do not allow the system to return to equilibrium.

NONLINEARITY – Nonlinearity occasionally causes an insignificant input to be amplified into an extreme event (butterfly effect).

SELF-ORGANIZATION – Complex systems are capable of self-organization in response to disruptive events.

CO-EVOLUTION – Complex systems irreversibly co-evolve with their environments.

When numerous self-organizing microservices interact autonomously in an emerging context of disruption, they will inevitably form a complex system in the pursuit of user value. And if we look more closely at Prof. Rzevski’s definition, we start to understand that it applies both to our system development landscape and to the “system” that is the human body. When we as a software development organization combine components that interact in order to create an awesome user experience, they will inevitably form a complex system, in a way that resembles how the organs in our own body interact to enable what we like to refer to as ‘being human’.

What the definition also clearly states is that the “global behavior of a complex system ‘emerges’ from the interaction of agents and is therefore unpredictable.” This means that, despite what people in the industry might say, end-2-end testing is to be seen as a complex task and treated as one. The outcome of an end-2-end test scenario cannot be seen as a truly deterministic, or mechanical, process, since we continuously suffer from disruption and co-evolution, and the system will never reach a state of equilibrium. (This is why so many end-2-end test automation projects fail.)
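
To make the point about non-determinism a little more concrete, here is a minimal sketch of what it could mean to judge an end-2-end scenario by the pattern across many runs rather than by a single pass or fail. Everything in it is an assumption made for illustration: the scenario, the service names and their success rates are invented, and a real system would of course not be a few independent dice rolls.

```python
import random


def run_checkout_scenario(rng: random.Random) -> bool:
    """One run of a hypothetical end-to-end scenario.

    Each (made-up) service succeeds with its own probability; the scenario
    passes only if every service in the chain succeeds.
    """
    service_success_rates = {"cart": 0.99, "payment": 0.97, "shipping": 0.98}
    return all(rng.random() < rate for rate in service_success_rates.values())


def observed_success_rate(runs: int, seed: int) -> float:
    """Repeat the scenario and return the fraction of passing runs."""
    rng = random.Random(seed)  # seeded so the experiment itself is repeatable
    passed = sum(run_checkout_scenario(rng) for _ in range(runs))
    return passed / runs


def within_expected_pattern(rate: float, lower_bound: float) -> bool:
    """Judge the pattern across many runs instead of a single outcome."""
    return rate >= lower_bound


if __name__ == "__main__":
    rate = observed_success_rate(runs=2000, seed=1)
    print(f"observed success rate: {rate:.3f}")
    print("pattern acceptable:", within_expected_pattern(rate, lower_bound=0.90))
```

A single run of this scenario tells us very little; the success rate over many runs, and how it shifts over time, is the kind of pattern we can actually respond to.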

Hence, we need another way to approach end-2-end testing in the industry. Therefore, I often talk about the importance of setting up continuous simulations that pick up on patterns in the behavior of the system, as an approach to answering complex test queries in later stages of development. And isn't this the true purpose of our test environment: to mimic reality? And, guess what, reality is never still or static. It is vibrant, constantly evolving and disruptive, and this needs to be replicated in our test environments. Why not start by using events and signals from production to stimulate an ongoing, automated, production-like test scenario in our simulated (test) environment, where we are able to induce changes and study the consequences? This is the basic idea of the digital twin applied to the software development domain.
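
As a rough illustration of this idea, the sketch below replays the same stream of "production" events through two versions of a simulated environment, one baseline and one with an induced change, and compares a pattern (the 95th percentile latency) between them. It is only a toy under stated assumptions: `Event`, `SimulatedSystem`, `recorded_production_events` and the latency model are all hypothetical names and behaviors invented for this example, and the events are generated rather than captured from a real production system.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str          # e.g. "order_placed" (invented event type)
    payload_size: int  # arbitrary unit of work carried by the event


class SimulatedSystem:
    """Hypothetical stand-in for a production-like test environment."""

    def __init__(self, latency_per_unit_ms: float, seed: int):
        self.latency_per_unit_ms = latency_per_unit_ms
        self._rng = random.Random(seed)

    def handle(self, event: Event) -> float:
        """Return a simulated latency in ms, with a little random jitter."""
        jitter = self._rng.uniform(0.9, 1.1)
        return event.payload_size * self.latency_per_unit_ms * jitter


def recorded_production_events(count: int, seed: int) -> list[Event]:
    """Assumption: stands in for events captured from production."""
    rng = random.Random(seed)
    return [Event("order_placed", rng.randint(1, 50)) for _ in range(count)]


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    index = min(len(ordered) - 1, math.ceil(0.95 * len(ordered)) - 1)
    return ordered[index]


def compare(events, baseline: SimulatedSystem, candidate: SimulatedSystem) -> dict:
    """Replay the same events through both systems and compare the pattern."""
    baseline_p95 = p95([baseline.handle(e) for e in events])
    candidate_p95 = p95([candidate.handle(e) for e in events])
    return {
        "baseline_p95_ms": baseline_p95,
        "candidate_p95_ms": candidate_p95,
        "p95_ratio": candidate_p95 / baseline_p95,
    }


if __name__ == "__main__":
    events = recorded_production_events(500, seed=7)
    # The induced change: the candidate is slower per unit of work.
    report = compare(events, SimulatedSystem(2.0, seed=42), SimulatedSystem(2.6, seed=42))
    print(report)
```

Both simulated systems use the same seed here, so the only difference between the two replays is the induced change itself; in a real setting, separating the effect of a change from the background noise is exactly the hard part.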

But our success in generating these production-like simulations lies in our ability to create a faked reality. What if we could create a simulation so production-like that it is impossible to distinguish from our real production environment? This is why, as a QA professional, I find the research in game development, subjective perception, fake news and misinformation, the metaverse and deepfakes interesting to follow. These capabilities will soon become highly useful, when our craft requires us to create production-like simulations to support our controlled experiments in safe environments.

What if a developer in the auto industry could pick up a VR headset after committing their latest source code and step into the role of a truck driver in a simulated reality, where the feature is instantly compiled into the virtualized truck, and experience the solution first-hand? And what if, at the same time, a test automation framework executed a vast number of simulations and calculations to provide a thorough report on the prognosis for this change being successful and sustainable? That is a real test environment if you ask me.
