Building artificial intelligence into mobile apps is a trend that has been gaining steam for a few years now, making smartphones smarter than ever. AI is being incorporated into many different technologies and industries, which has led to mobile apps and app features that would have been nearly impossible to create a decade ago.

Microsoft is on the front lines of this revolution and has recently announced a number of new features and services powered by AI. For the past 3 years, Microsoft has surfaced many of its AI capabilities through a set of APIs and SDKs that it calls Microsoft Cognitive Services. These services use AI to tackle specific use cases and offer highly customizable platforms for developers.

It looks to be another awesome year for new AI-powered services and for creative approaches to incorporating these services into mobile apps.

Cognitive Services Labs

In addition to the recently announced Cognitive Services entering Preview and general availability (GA), Cognitive Services Labs was created last year to give developers an early look at experimental AI-powered tools that Microsoft is working on right now.

Ink Analysis

Microsoft already lists a number of services that offer OCR capabilities, so what happens when you marry OCR and image recognition with artificial intelligence? One answer to that question is Project Ink Analysis.

Ink Analysis can recognize handwriting in 67 languages as well as various shapes, but it also brings more intelligent features to the mix, such as recognizing the structure of writing (bullet points, paragraphs, how words relate to each other). When your mobile users write or draw in your app, Ink Analysis would let you make sense of their content or beautify it into perfectly aligned sentences and shapes while keeping the look and size of the original drawings.

Conversation Learner & Personality Chat

Chat bots are a popular trend that will continue well into 2018, and so will the difficulty of training them to act and sound like a human. Two experimental projects have the potential to make that training process easier and more effective: Project Conversation Learner and Project Personality Chat.

The Conversation Learner uses machine learning to extract patterns from example conversations and then uses those patterns to write conversation logic for you. It allows subject matter experts, business stakeholders, and anyone else to contribute example interactions that improve the models powering a chat bot or other interactive agent.

Making a chat bot that simply provides an appropriate response is always the basic goal, but giving the chat bot a unique personality that fits the situation or the company should be what we actually strive for. Project Personality Chat can improve existing models by sprinkling in small talk, either drawn from a curated list or generated in real time. The small talk responses can be aligned with a chosen personality, which can be customized based on the context of the conversation.

Cognitive Services Updates

Custom Vision

Computer Vision identifies locations, objects, and text

Smartphone users are still obsessed with taking pictures, and mobile apps keep finding new ways to incorporate and use those pictures. Microsoft’s Custom Vision service gives your app an intelligent way to recognize, categorize, and pull data from images using vision models you train on your own images. Custom Vision is not new, but it was recently gifted the power of object detection.

After proper training, Custom Vision will return the location of each recognized object in an image as a bounding box. This new ability, along with its existing functionality, can support a number of cool features in a mobile app.
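To make "location" concrete, here is a minimal Python sketch that post-processes an object detection result. It assumes the response shape described for the Custom Vision Prediction REST API, where each prediction carries a `tagName`, a `probability`, and a `boundingBox` whose `left`, `top`, `width`, and `height` are fractions of the image size. The sample JSON, the 0.5 threshold, and the helper name `to_pixel_boxes` are made up for illustration.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass
class PixelBox:
    tag: str
    probability: float
    left: int
    top: int
    width: int
    height: int


def to_pixel_boxes(response_json: str, image_width: int, image_height: int,
                   threshold: float = 0.5) -> List[PixelBox]:
    """Convert normalized bounding boxes to pixel coordinates.

    Assumes a "predictions" list whose entries have "tagName",
    "probability", and a "boundingBox" with left/top/width/height in 0..1.
    """
    data = json.loads(response_json)
    boxes = []
    for p in data.get("predictions", []):
        if p["probability"] < threshold:
            continue  # skip low-confidence detections
        bb = p["boundingBox"]
        boxes.append(PixelBox(
            tag=p["tagName"],
            probability=p["probability"],
            left=round(bb["left"] * image_width),
            top=round(bb["top"] * image_height),
            width=round(bb["width"] * image_width),
            height=round(bb["height"] * image_height),
        ))
    # Most confident detections first
    boxes.sort(key=lambda b: b.probability, reverse=True)
    return boxes


# Hypothetical sample response for a 1000x800 photo
sample = json.dumps({
    "predictions": [
        {"tagName": "dog", "probability": 0.92,
         "boundingBox": {"left": 0.1, "top": 0.25, "width": 0.4, "height": 0.5}},
        {"tagName": "cat", "probability": 0.12,
         "boundingBox": {"left": 0.6, "top": 0.1, "width": 0.2, "height": 0.3}},
    ]
})

for box in to_pixel_boxes(sample, 1000, 800):
    print(box)
```

With pixel coordinates in hand, an app could draw an overlay on the photo or crop each detected object for further processing.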

Microsoft Translator Android SDK

A common pain point when using most Cognitive Services in a mobile app is the need for a good internet connection throughout most of the experience. Imagine wanting to create an app that provides AI-powered translation in remote parts of the world and that can run on devices without a dedicated AI chip.

This is now possible for Android developers with the Translator local feature, which uses offline language packs when a device loses its internet connection. This opens the translation service up to a much broader market of apps that run on common device CPUs and need offline functionality.
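The general online-first, offline-fallback idea can be sketched in a few lines of Python. This is not the Translator SDK's API: `translate_with_fallback`, `translate_offline`, the `OFFLINE_PACKS` phrase table, and the `unreachable_service` stand-in are all hypothetical, and a real language pack would hold a compact translation model rather than a lookup table.

```python
from typing import Callable, Dict, Tuple


class OfflinePackMissing(Exception):
    """Raised when no offline language pack covers the request."""


# Hypothetical offline "language pack": a tiny phrase table keyed by
# (source, target) language codes, used purely for illustration.
OFFLINE_PACKS: Dict[Tuple[str, str], Dict[str, str]] = {
    ("en", "es"): {"hello": "hola", "thank you": "gracias"},
}


def translate_offline(text: str, src: str, dst: str) -> str:
    pack = OFFLINE_PACKS.get((src, dst))
    if pack is None or text.lower() not in pack:
        raise OfflinePackMissing(f"no offline coverage for {src}->{dst}: {text!r}")
    return pack[text.lower()]


def translate_with_fallback(text: str, src: str, dst: str,
                            online: Callable[[str, str, str], str]) -> Tuple[str, str]:
    """Try the cloud translator first; on a connection failure use the local pack.

    Returns (translation, mode), where mode is "online" or "offline".
    """
    try:
        return online(text, src, dst), "online"
    except ConnectionError:
        # Device lost connectivity: fall back to the on-device language pack
        return translate_offline(text, src, dst), "offline"


def unreachable_service(text: str, src: str, dst: str) -> str:
    # Stand-in for a network call made while the device is offline
    raise ConnectionError("network unreachable")


print(translate_with_fallback("Hello", "en", "es", unreachable_service))
```

Passing the online call in as a function keeps the fallback logic testable without a network, which matters when the whole point is behavior under lost connectivity.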

Microsoft plans to keep making large investments in artificial intelligence and finding new ways to enhance its existing products. For Microsoft, this year’s trend appears to be bringing AI-powered solutions to edge devices, running them on smaller and smaller CPUs. If this holds true, be ready to see some pretty amazing uses of AI on your smartphone.

Cognitive Services API Demo

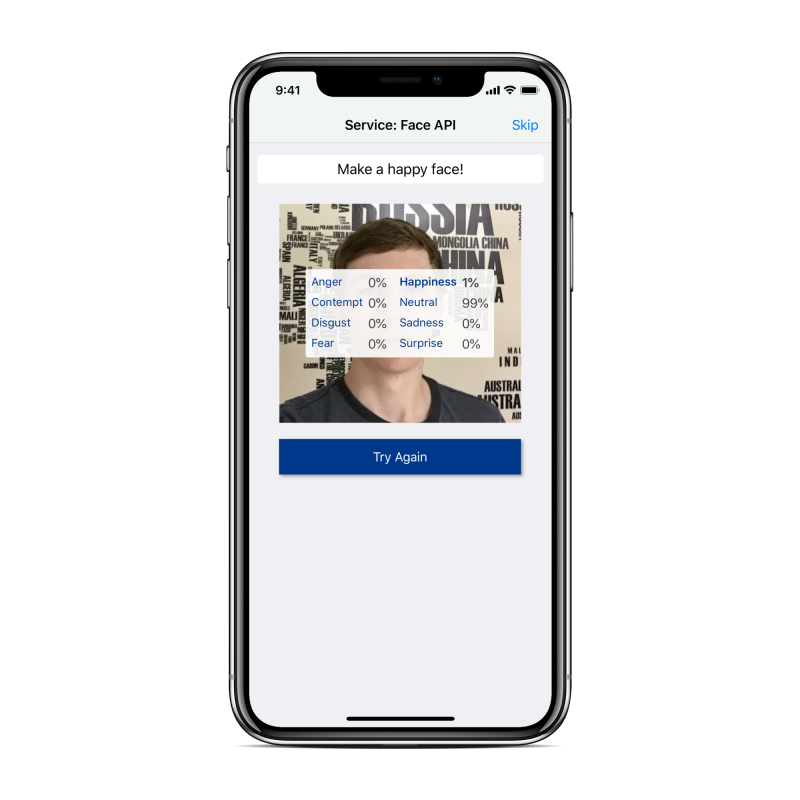

I created a Xamarin.Forms application to demonstrate a few simple examples of a mobile app incorporating Cognitive Services. The application runs on iOS and Android and interfaces with three different Cognitive Services: Face API, Computer Vision, and Text Analytics. The app asks the user to take a picture of their face, write a sentence, or take a picture of anything, and then displays information about the image or text.

Cognitive App Screenshot – made using mockuphone.com
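For a rough idea of what one of these calls involves, the Python sketch below builds (without sending) a Text Analytics sentiment request and parses a response. It assumes the v2.0-era REST path `/text/analytics/v2.0/sentiment`, the standard `Ocp-Apim-Subscription-Key` header, and a response whose documents carry a `score` between 0 (negative) and 1 (positive). The endpoint host and key are placeholders, and the demo app itself is written in C#, so its code may be structured differently.

```python
import json
import urllib.request

# Placeholders: substitute your own resource endpoint and key.
ENDPOINT = "https://westus.api.cognitive.microsoft.com"
SUBSCRIPTION_KEY = "<your-text-analytics-key>"


def build_sentiment_request(sentence: str, language: str = "en") -> urllib.request.Request:
    """Build a POST request for sentiment analysis (assumed v2.0-style API)."""
    body = {"documents": [{"id": "1", "language": language, "text": sentence}]}
    return urllib.request.Request(
        url=f"{ENDPOINT}/text/analytics/v2.0/sentiment",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": SUBSCRIPTION_KEY,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_sentiment(response_body: str) -> float:
    """Return the score of the first document (0 = negative, 1 = positive)."""
    return json.loads(response_body)["documents"][0]["score"]


req = build_sentiment_request("I love taking pictures with my phone!")
print(req.get_method(), req.full_url)
# Sending it would look like: urllib.request.urlopen(req).read().decode("utf-8")
print(parse_sentiment('{"documents": [{"id": "1", "score": 0.96}], "errors": []}'))
```

Keeping request construction separate from the network call makes it easy to inspect exactly what the app sends before wiring in a real key.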

If you would like to download the source code and work with it yourself, head to the GitHub link below. Be sure to look through the README file for help setting up the app.

https://github.com/hvaughan3/Sogeti-Labs-Cognitive-App
